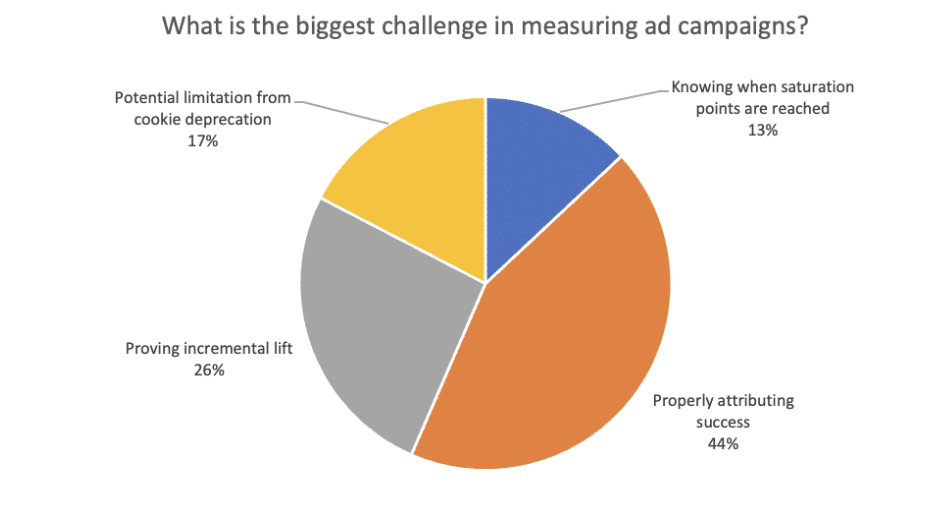

In mid-March, we ran a webinar on how to model your marketing mix with machine learning. During the webinar, we polled the audience to find out their most significant challenge when measuring their ad campaigns. The poll results, as shown below, indicate that 44% of the audience felt that properly attributing success was the biggest challenge, followed by 26% who said that proving incremental lift was the biggest issue.

Poll results from our “Model Your Marketing Mix With Machine Learning” webinar

That means 70% of the audience felt that properly attributing success and proving incremental lift were their biggest challenges. So, we wanted to provide an in-depth view of these topics and show how AI and predictive analytics can help overcome these challenges.

In a previous blog post, we covered the attribution challenge; here, we’ll cover how AI and machine learning can help prove incremental lift for an ad campaign, channel, or media partner.

What do we mean when we say “incremental results”?

When we talk about incremental results in the context of marketing performance, we’re referring to the change in an outcome attributable to a specific intervention or action. In other words, incremental results are the additional gains or benefits we achieve by taking a particular action compared to what we would have achieved if we had done nothing.

This concept is particularly relevant in performance marketing campaigns, where marketers are constantly looking for ways to drive more results, whether conversions, sales, or revenue. Measuring incremental results is essential for determining the effectiveness of different marketing strategies and tactics.

By measuring the incremental impact of a particular campaign or action, we can assess its true value and make informed decisions about allocating resources. This approach allows us to optimize our marketing efforts and maximize our return on investment (ROI). Focusing on incremental results is critical to continuous improvement and growth in any marketing organization.

Why is it difficult to measure the incremental lift of an ad campaign?

Incrementality goes beyond conversion rates, ROI, ROAS (return on ad spend), and similar metrics: it captures only the lift that is uniquely attributable to the campaign under evaluation. Several factors make that lift hard to measure.

One of the main challenges is the presence of confounding variables that can influence the campaign’s outcome. These variables could be external factors, such as changes in the economy, seasonality, or the competitive landscape, or internal factors, such as changes in pricing or product features. If these variables are not properly controlled for, it can be challenging to attribute any changes in the outcome to the specific intervention being evaluated.

The most common challenge in measuring incremental results is the difficulty of isolating the effect of the intervention from other factors that may be driving the outcome. For example, marketers may launch a campaign simultaneously with other initiatives, such as changes in the sales team or new product launches. Or, a marketing campaign could be launched right when the website gets a refresh. If the impact of the marketing campaign is not measured separately from these other initiatives, it can be difficult to determine the true incremental impact of the campaign.

By carefully controlling for these confounding variables and overlapping initiatives, companies can obtain a more accurate assessment of the incremental impact of their marketing campaigns.

How can you measure incrementality in marketing?

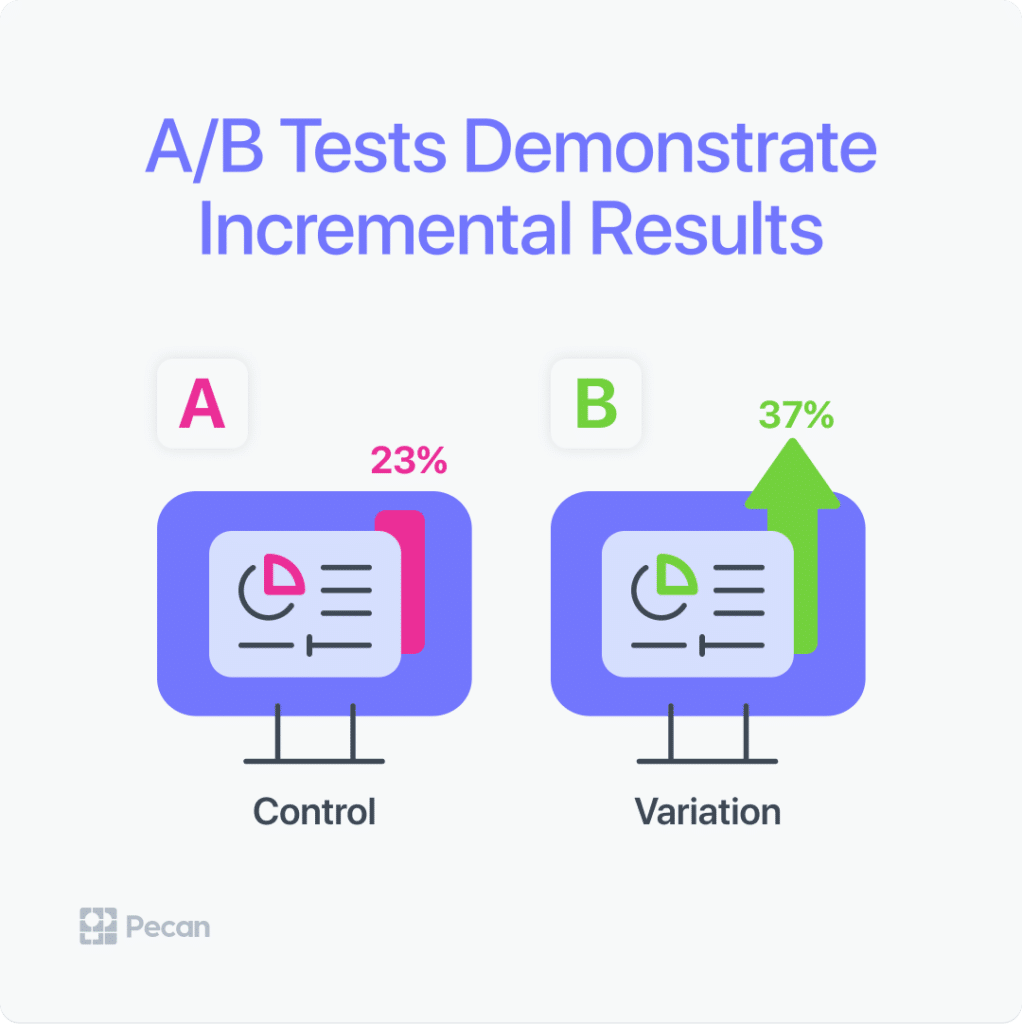

Several methods can be used to measure incrementality in marketing. One of the most rigorous and widely accepted is the randomized controlled experiment, also known as an A/B test. In an A/B test, a randomly selected group of individuals is exposed to a marketing campaign, ad treatment, web page, etc., while another randomly selected group is not. By comparing the outcomes of the two groups, we can estimate the incremental impact of the campaign, treatment, or page.

A/B tests are an excellent way to understand the real results of marketing efforts.
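To make the comparison concrete, here is a minimal sketch of how incremental conversions and relative lift could be computed from the two groups of an A/B test. The `Group` class, the function names, and all of the numbers are hypothetical and exist only for illustration; a real analysis would also check statistical significance before acting on the result.

```python
from dataclasses import dataclass


@dataclass
class Group:
    """Aggregate results for one arm of an A/B test (hypothetical structure)."""
    users: int
    conversions: int

    @property
    def rate(self) -> float:
        # Conversion rate for this arm
        return self.conversions / self.users


def incremental_conversions(treatment: Group, control: Group) -> float:
    """Conversions in the exposed group beyond what the control rate predicts."""
    expected_without_campaign = control.rate * treatment.users
    return treatment.conversions - expected_without_campaign


def relative_lift(treatment: Group, control: Group) -> float:
    """Relative increase in conversion rate of the exposed group over the control group."""
    return (treatment.rate - control.rate) / control.rate


# Made-up example numbers, not real campaign data
exposed = Group(users=10_000, conversions=600)
holdout = Group(users=10_000, conversions=500)

print(incremental_conversions(exposed, holdout))
print(f"{relative_lift(exposed, holdout):.1%}")
```

With these made-up numbers, the exposed group converts at 6% against 5% for the holdout, which works out to roughly 100 incremental conversions and a relative lift of about 20%.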

Another approach is the quasi-experiment, which involves comparing the outcomes of an exposed group to a control group that is similar in all respects except for the fact that they did not receive the intervention. This method can be useful when it is not feasible or ethical to randomly assign individuals to a treatment group.
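One common quasi-experimental technique is difference-in-differences. It is not named in this post and is used here only as an illustration. It compares the before-and-after change in an exposed group with the change over the same period in a similar, unexposed group, on the assumption that both groups would have followed the same trend without the campaign. The function name and the figures below are hypothetical.

```python
def difference_in_differences(
    exposed_before: float,
    exposed_after: float,
    comparison_before: float,
    comparison_after: float,
) -> float:
    """Estimate incremental impact as the exposed group's change minus the comparison group's change.

    Assumes both groups would have moved in parallel without the campaign.
    """
    exposed_change = exposed_after - exposed_before
    comparison_change = comparison_after - comparison_before
    return exposed_change - comparison_change


# Made-up weekly sales for a region that saw the campaign and a similar region that did not
estimate = difference_in_differences(
    exposed_before=1_000,
    exposed_after=1_300,
    comparison_before=900,
    comparison_after=1_000,
)
print(estimate)
```

In this example, sales rose by 300 in the exposed region and by 100 in the comparison region, so the estimate attributes 200 weekly sales to the campaign. The estimate is only as good as the similarity between the two groups.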

Observational studies can also be used to measure incrementality, although they are generally considered less rigorous than randomized controlled experiments or quasi-experiments. Observational studies compare the outcomes of individuals who happened to be exposed to the intervention with those who were not, without a deliberately constructed control group. This approach can be useful when it is difficult to establish a control group, but it’s important to carefully control for confounding variables.

It’s also important to consider the time lag between the intervention and the outcome, as the full impact of a marketing campaign may not be realized until several months after the campaign has ended.

In addition, it is important to ensure that the sample size is large enough to detect the effect you expect, and to test the statistical significance of the results so that you can be confident they are not due to chance and can be generalized to a larger audience. By carefully measuring incrementality in marketing, companies can optimize their marketing efforts, scale their findings, and maximize their ROI.
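As a rough sketch of what checking significance and sample size can look like, the code below uses a standard two-proportion z-test and a textbook sample-size approximation. These are common choices for conversion-rate tests, not methods prescribed by this post, and the function names, default thresholds, and numbers are assumptions for illustration.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_t: int, n_t: int, conv_c: int, n_c: int) -> tuple[float, float]:
    """Return (z statistic, two-sided p-value) for a difference in conversion rates."""
    p_t = conv_t / n_t
    p_c = conv_c / n_c
    # Pooled rate under the null hypothesis that both groups convert equally
    p_pool = (conv_t + conv_c) / (n_t + n_c)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_t + 1 / n_c))
    if se == 0:
        raise ValueError("Standard error is zero; the test is undefined.")
    z = (p_t - p_c) / se
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def sample_size_per_group(p_control: float, p_treatment: float,
                          alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate users needed per group to detect the given rate difference."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = p_control * (1 - p_control) + p_treatment * (1 - p_treatment)
    return math.ceil((z_alpha + z_power) ** 2 * variance / (p_treatment - p_control) ** 2)


# Same 6% vs. 5% conversion rates at two made-up sample sizes
print(two_proportion_z_test(600, 10_000, 500, 10_000))  # larger test
print(two_proportion_z_test(60, 1_000, 50, 1_000))      # smaller test
print(sample_size_per_group(0.05, 0.06))
```

The same one-point difference in conversion rate clears the conventional 0.05 threshold with 10,000 users per group but not with 1,000, which is why sample size needs to be planned before the test runs.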

How is incrementality testing relevant to predictive analytics?

Incrementality testing is relevant to predictive analytics because predictive models are often used to optimize marketing campaigns by predicting which customers are most likely to respond positively to a campaign. However, predictive models can only make predictions based on past data, and they cannot predict the effect of a specific campaign on a specific customer.

Incrementality testing provides a way to measure the actual impact of a campaign on a specific group of customers, which can be used to validate and improve the predictive models used to optimize the campaign.

By comparing the behavior of the test group to the control group, incrementality testing can help determine whether a particular campaign is actually driving incremental sales or whether the sales would have occurred anyway. This information can be used to adjust the predictive models and improve the accuracy of the predictions.

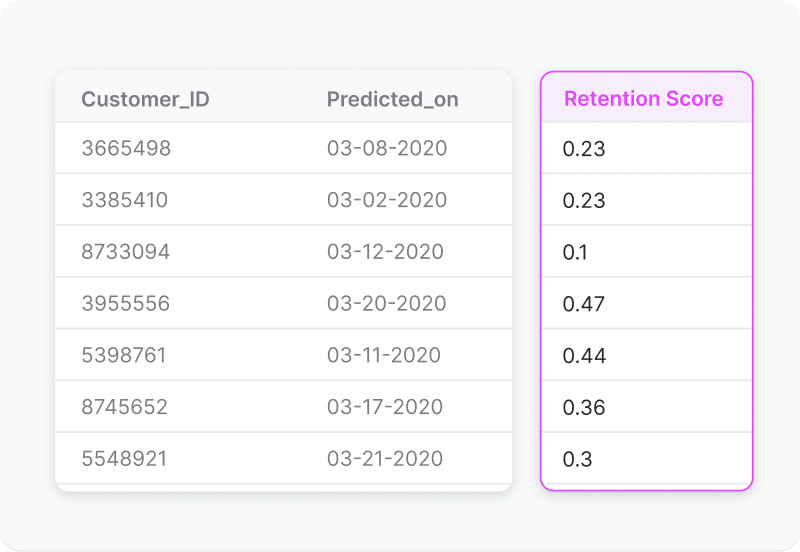

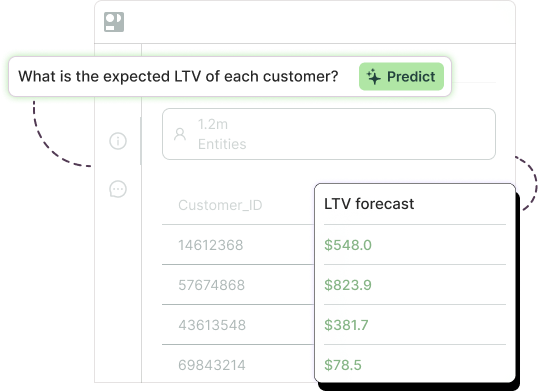

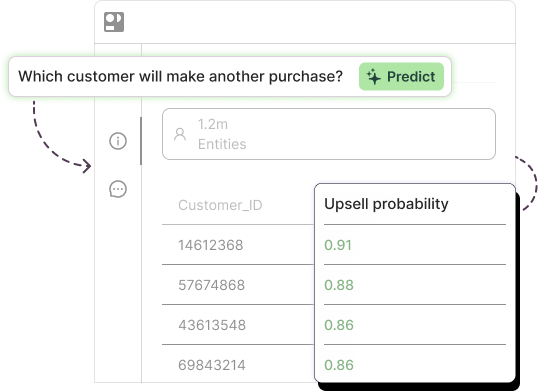

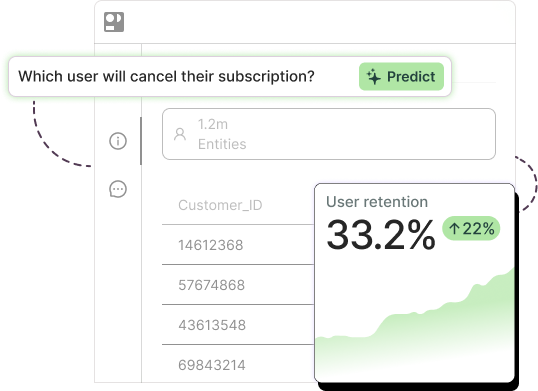

Machine learning algorithms can also identify the factors that are most strongly associated with incremental impact, such as demographic characteristics, purchase history, or online behavior. For example, Pecan’s platform is designed to identify these factors down to the user level.

Pecan’s platform generates individual-level predictions and provides insights into the influences on predictions.
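As a much simpler illustration of looking for factors associated with incremental impact, the sketch below breaks A/B test results down by a single customer attribute and compares lift across segments. It is not Pecan’s method, and it does not involve machine learning; the record format, segment names, and data are all hypothetical.

```python
from collections import defaultdict


def lift_by_segment(records):
    """Return {segment: treatment rate minus control rate}.

    Each record is a (segment, group, converted) tuple, where group is
    "treatment" or "control". Segments missing either group are skipped.
    """
    # Per segment and group: [number of users, number of conversions]
    counts = defaultdict(lambda: {"treatment": [0, 0], "control": [0, 0]})
    for segment, group, converted in records:
        tally = counts[segment][group]
        tally[0] += 1
        tally[1] += int(converted)

    lifts = {}
    for segment, groups in counts.items():
        (n_t, c_t), (n_c, c_c) = groups["treatment"], groups["control"]
        if n_t == 0 or n_c == 0:
            continue
        lifts[segment] = c_t / n_t - c_c / n_c
    return lifts


# Made-up data: 100 users per segment and group
records = (
    [("new", "treatment", True)] * 30 + [("new", "treatment", False)] * 70
    + [("new", "control", True)] * 10 + [("new", "control", False)] * 90
    + [("returning", "treatment", True)] * 40 + [("returning", "treatment", False)] * 60
    + [("returning", "control", True)] * 38 + [("returning", "control", False)] * 62
)

for segment, lift in lift_by_segment(records).items():
    print(f"{segment}: {lift:+.2f}")
```

In this made-up data, returning customers convert more often overall, but most of them would have converted anyway; the campaign’s incremental effect is concentrated among new customers. With many attributes or small segments, a manual breakdown like this quickly becomes unreliable, which is where model-based approaches come in.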

Overall, predictive analytics can play a valuable role in supporting incrementality testing in marketing by helping to identify promising campaigns, estimate incremental impact, and provide insights into the drivers of success. By leveraging these techniques, companies can optimize their marketing strategies and achieve better results with less waste.

Find out more about how Pecan can help you boost and prove real marketing impact. Contact us to set up a time to chat about Pecan and your marketing team’s needs.