In a nutshell:

- Explainable AI shows how decisions are made in predictive models

- Not all AI is explainable, but explainability is crucial for transparency and trust

- Global and local explanations help businesses understand AI predictions

- Pecan AI offers hyper-granular explanations for customer-level predictions

- Accessible, explainable AI can provide valuable insights for business teams

If you’ve ever gotten a letter from a bank that explained how different financial issues influenced a credit application, you’ve seen explainable AI at work. A computer used math and a set of complex formulas to calculate a score. Then, with that score, it determined whether to approve or deny your application. In making that decision, some data points were more or less important. Maybe your long history of on-time payments or your low amount of debt contributed to your application’s approval.

Similarly, explainable AI shows humans how it arrived at a decision by revealing how the different inputs figured into its calculations. To be sure, this idea might sound obscure or only relevant to the most hardcore data people. However, explainable AI brings significant business advantages that anyone interested in applying AI should consider.

Not All AI Is Explainable

Humans build AI systems, but not even those builders can always interpret precisely how an AI comes up with a specific decision or output. Those kinds of AI systems are sometimes called “black boxes” or “opaque” because it’s hard to know what happened inside them. They made a decision or spit out a number, but no one can tell what led to that result.

However, many AI processes are built so that humans can understand how they arrive at their conclusions. These are called “explainable.” In some industries and countries, there’s growing interest in regulations that would require AI explainability in areas such as financial services, human resources, and health care. Marketing can also play a role in responsible AI governance, especially when explainable analytic methods are used in marketing processes.

What Explainable AI Provides for Business

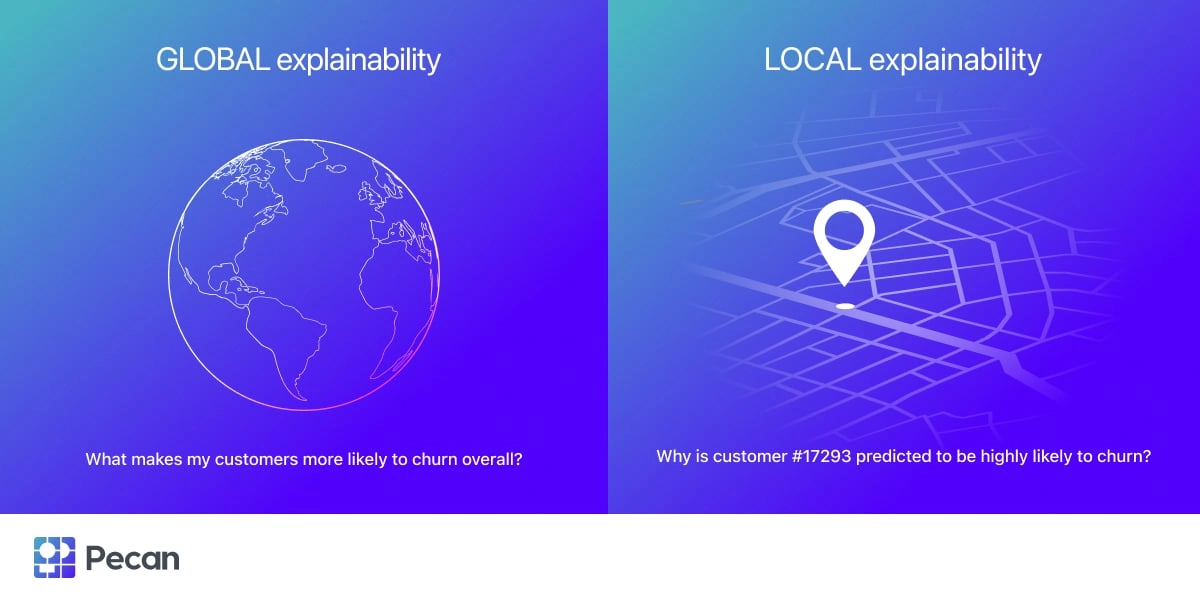

Explainable AI can reveal both which factors mattered most to the system overall and which factors mattered most to any specific decision or output. Two types of explanations may be available:

- Global explanations. For example, you might want to predict customer churn. You might learn that customer service interactions and website visits are the top predictors of churn overall, across all your customers.

- Local explanations. While a global explanation for an AI’s predictions is helpful, it’s even more valuable to pinpoint reasons for a single prediction. In the churn example, why is a particular customer predicted as highly likely to churn? Their personal reasons might be reduced activity and late payments. Knowing those reasons lets you decide on the right action to retain that customer — or to let them churn.

Global vs. local explainability

Ideally, an explainable AI system predicting churn would offer global and local explanations. You could receive detailed insights into both broader issues around churn and specific, customer-level explanations. With those explanations in hand, you can develop comprehensive strategies to reduce churn. Additionally, you can plan personalized outreach to each customer to try to retain them.
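For readers who want to see the difference in concrete terms, here is a minimal Python sketch of global versus local explanations. It assumes a toy linear churn model: the coefficients, feature names, and customers are all made up for illustration, and the method shown (coefficient times a customer's deviation from the average) is one simple way to explain a linear model, not a description of how any particular product works.

```python
from statistics import mean

# Hypothetical coefficients for a toy linear churn model (log-odds scale).
# A real model would learn these from historical data.
COEFFICIENTS = {
    "support_tickets": 0.8,
    "late_payments": 1.2,
    "weekly_visits": -0.5,
}

# Made-up customers, for illustration only.
CUSTOMERS = {
    "cust_a": {"support_tickets": 0, "late_payments": 0, "weekly_visits": 6},
    "cust_b": {"support_tickets": 3, "late_payments": 2, "weekly_visits": 1},
    "cust_c": {"support_tickets": 6, "late_payments": 1, "weekly_visits": 2},
}


def feature_means(customers):
    """Average value of each feature across all customers."""
    return {f: mean(c[f] for c in customers.values()) for f in COEFFICIENTS}


def local_explanation(customer, means):
    """How much each feature moves this customer's churn score
    relative to the average customer (positive = toward churn)."""
    return {f: COEFFICIENTS[f] * (customer[f] - means[f]) for f in COEFFICIENTS}


def global_explanation(customers, means):
    """Average size of each feature's contribution across all customers."""
    per_customer = [local_explanation(c, means) for c in customers.values()]
    return {f: mean(abs(p[f]) for p in per_customer) for f in COEFFICIENTS}


means = feature_means(CUSTOMERS)
global_importance = global_explanation(CUSTOMERS, means)
local_b = local_explanation(CUSTOMERS["cust_b"], means)

print("Global importance:", global_importance)
print("Why cust_b is at risk:", local_b)
```

In this toy data, support tickets rank highest overall, while for cust_b the largest contribution comes from late payments, followed by low visit activity. That gap between the overall picture and one customer's picture is what a local explanation is meant to surface.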

As AI becomes increasingly important to businesses, explainable AI offers a window into its workings and builds trust in its recommendations.

Pecan’s Explainability Features

Pecan AI’s low-code predictive analytics platform helps you automatically prepare data for AI, build and evaluate machine learning models, and deploy models into production. All that happens in far less time than traditional data science practitioners and techniques require, leading to much faster ROI.

Pecan’s platform offers hyper-granular explanations of customer-level predictions—local explanations that empower business teams to plan targeted, customized outreach. Additionally, specific scores for churn, LTV, upsell/cross-sell, and other models let you segment and define groups of customers for targeted offers, promotions, or messages.
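As a rough illustration of score-based segmentation, the sketch below groups customers by a churn score. The scores, thresholds, and segment names are hypothetical and chosen only for the example; this is not Pecan's API or output format.

```python
# Hypothetical churn scores between 0 and 1, for illustration only.
churn_scores = {"cust_a": 0.12, "cust_b": 0.83, "cust_c": 0.47}


def segment_for(score, high=0.7, medium=0.4):
    """Map a churn score to a segment using assumed cutoffs."""
    if score >= high:
        return "high_risk"
    if score >= medium:
        return "medium_risk"
    return "low_risk"


# Group customer IDs by segment so each group can get its own offer or message.
segments = {}
for customer_id, score in churn_scores.items():
    segments.setdefault(segment_for(score), []).append(customer_id)

print(segments)
```

In practice, the cutoffs would depend on the business: how many customers a team can reach, and what a retention offer costs compared with a lost customer.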

While AI might seem mysterious and magical, explainable methods and the right tools can make its decisions more transparent, trustworthy, and valuable to business teams.

Get in touch to learn more about how Pecan’s low-code predictive analytics platform offers accessible, explainable AI with real business impact.