In a nutshell:

- Data quality is crucial for decision-making and AI models.

- Poor data quality can lead to financial losses and operational inefficiencies.

- Data leaders play a pivotal role in championing data quality initiatives.

- High-quality data is essential for strategic decision-making and predictive AI models.

- Implementing data quality tools and techniques is vital for maintaining data integrity in large enterprises.

Would you cook a recipe for dinner using a spoiled ingredient?

You know where this is headed. One lousy ingredient makes the whole meal less than appetizing. And unfortunately, using bad data to fuel your decision-making processes and AI models means you won’t enjoy the results you get.

Any company striving for a data-driven strategy and decisions, or seeking to implement cutting-edge AI for a competitive advantage, needs to make data quality a major focus.

Data leaders, including data analytics managers, directors, and chief data officers, play a critical role in ensuring that the data used within their organizations is accurate, reliable, and up-to-date.

Failing to ensure that your models are fed the highest-quality data produces poorer results and can trigger a cascade of problems that hamper your operations.

Are you still hungry to learn more about data quality management? (Sorry if we ruined your actual appetite with that intro.)

This blog post reviews the fundamentals of data quality management and why enterprises must keep their data in line. We’ll look at the potential impacts and risks of poor data quality.

Join us to savor a journey through the importance of data quality management and the best practices for maintaining high-quality data within an organization.


The Fundamentals of Data Quality Management

Data can offer an organization a vast number of actionable insights. Properly leveraged, it supports strategic decision-making, predictive analysis, trend spotting, and more.

Data quality management ensures this valuable asset is of the highest caliber, meaning it’s accurate, timely, relevant, complete, and consistent.

Data quality management comprises various processes, including data cleaning, integration, and governance. These processes can:

- detect and correct irregularities within data

- handle redundancies

- ensure consistency

- maintain overarching control over the entire data lifecycle

These processes require implementing tools, methodologies, and solutions to manage data effectively and ensure it is fit for its intended uses.

Moreover, comprehensive data quality management involves establishing clear data governance policies and ensuring strict adherence to them across the organization. These policies govern how sensitive data is handled, how data privacy is managed, and how the organization complies with regulatory requirements.

Data isn’t just a byproduct of business activities today. It’s become a critical driver of innovation, competitiveness, and growth.

As a result, you’re on the hook for ensuring data quality. It’s a strategic necessity, not an operational choice.

The Cost of Poor Data Quality

Poor data quality can have a substantial impact on any enterprise. It’s not just about the potential for incorrect insights or misguided strategies, but also the financial cost of these data problems. According to a 2017 study from Gartner, poor data quality can cost businesses an average of $15 million per year in losses. That’s more than enough reason to make sure you’re getting things right.

Impact on Business Decisions

Poor data quality produces inaccurate business intelligence. Leaders who rely on it end up making decisions on a flawed foundation, which can lead to inaccurate forecasts, ineffective marketing strategies, and impractical business goals.

The unfortunate consequences can include wasted resources, lost opportunities, and—perhaps the most dangerous in today’s hypercompetitive market—decreased customer satisfaction.

Impact on Operational Efficiency

Low-quality data can significantly reduce operational efficiency. It can create inefficient workflows, increase the manual effort needed to correct errors, and slow down business processes. That’s not how you want your business processes characterized, and the time and money spent on these fixes would be better spent elsewhere. Investing in data quality pays off in efficiency and better results.

Impact on Compliance and Risk Management

Data is often used for compliance reporting and risk management. Poor data quality in these areas can lead to regulatory fines and an increased risk of compliance breaches. Ensuring data quality can help minimize these risks.

Impact on Customer Relationships

Poor data quality can negatively impact customer relationships. Incorrect customer data can lead to communication issues, reduced customer satisfaction, and a potential loss of business. Maintaining high-quality customer data is crucial in strengthening the overall customer experience.


What Are the 5 Measures of Data Quality?

Five measures are commonly used to assess data quality:

- Accuracy: Refers to how correct and precise the data is.

- Completeness: Indicates whether all necessary data is present.

- Reliability: Focuses on the consistency and dependability of the data.

- Relevance: Determines if the data is applicable and valuable for its intended purpose.

- Timeliness: Reflects how current the data is and if it is up-to-date.

As you can tell, these fundamental characteristics all play a crucial role in data-driven decisions. They are also all valuable metrics for judging the quality of the data you use to build predictive AI models.
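To make these measures concrete, here is a minimal Python sketch that scores a small batch of records on three of them. It assumes hypothetical customer records with `email`, `age`, and `updated` fields, and it uses simple validation rules as a rough proxy for accuracy (true accuracy requires a trusted source to compare against). Reliability and relevance are left out because they depend on business context rather than a single formula.

```python
from datetime import datetime, timedelta

# Hypothetical customer records; the field names are illustrative only.
records = [
    {"id": 1, "email": "ana@example.com", "age": 34, "updated": datetime(2024, 5, 1)},
    {"id": 2, "email": None, "age": 29, "updated": datetime(2023, 1, 15)},
    {"id": 3, "email": "li@example", "age": -4, "updated": datetime(2024, 4, 20)},
]

def completeness(rows, fields):
    """Share of required field values that are present (not None or empty)."""
    total = len(rows) * len(fields)
    filled = sum(1 for r in rows for f in fields if r.get(f) not in (None, ""))
    return filled / total if total else 1.0

def is_valid_email(r):
    """Hypothetical rule: an '@' followed by a domain containing a dot."""
    email = r.get("email")
    return isinstance(email, str) and "@" in email and "." in email.split("@")[-1]

def is_plausible_age(r):
    """Hypothetical rule: an integer age between 0 and 120."""
    age = r.get("age")
    return isinstance(age, int) and 0 <= age <= 120

def validity(rows, rules):
    """Share of rows passing every rule; a rough stand-in for accuracy."""
    if not rows:
        return 1.0
    return sum(1 for r in rows if all(rule(r) for rule in rules)) / len(rows)

def timeliness(rows, field, as_of, max_age):
    """Share of rows whose timestamp falls within max_age of as_of."""
    if not rows:
        return 1.0
    fresh = sum(1 for r in rows if r.get(field) is not None and as_of - r[field] <= max_age)
    return fresh / len(rows)

as_of = datetime(2024, 6, 1)
scores = {
    "completeness": completeness(records, ["email", "age", "updated"]),
    "validity": validity(records, [is_valid_email, is_plausible_age]),
    "timeliness": timeliness(records, "updated", as_of, timedelta(days=180)),
}
print(scores)
```

On this sample, completeness comes out to 8/9, validity to 1/3, and timeliness to 2/3. The 180-day freshness window and the validation rules are arbitrary choices you would tune to your own data.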

Making sure a small database is good to go on these measures? That’s relatively easy. However, bringing data across an enterprise up to speed on all five of these criteria is a significant challenge.

Ensuring Data Quality at Scale

Scaling up data management within a large enterprise is daunting, and maintaining data quality as the quantity of data increases is even more challenging. As your data volume grows, it’s vital not to let the quality slip.

Let’s explore how to effectively manage data quality at scale, focusing on the challenges faced by large enterprises and the crucial role of data leaders in championing data quality initiatives.

Challenges and Solutions for Maintaining Data Quality in Large Enterprises

The scale at which data is generated and processed in large enterprises presents unique challenges to maintaining data quality. These challenges include increased system complexity, diversity in data types, and the need for higher processing speeds.

One primary solution involves implementing automation in data quality checks. Automated processes can handle larger volumes of data and deliver results faster than manual efforts.
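As an illustration of what an automated check might look like, the sketch below runs a set of rules over each incoming batch and blocks the batch when any rule’s failure rate exceeds its tolerance. The `Check` class, the `run_quality_gate` function, the order fields, and the thresholds are all hypothetical; dedicated data quality tools offer richer versions of the same idea.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Check:
    name: str
    predicate: Callable[[dict], bool]  # returns True when a row passes
    max_failure_rate: float = 0.0      # share of failing rows tolerated before blocking

def run_quality_gate(batch, checks):
    """Run every check over a batch; return (passed, per-check report)."""
    report = {}
    passed = True
    for check in checks:
        failures = sum(1 for row in batch if not check.predicate(row))
        rate = failures / len(batch) if batch else 0.0
        ok = rate <= check.max_failure_rate
        report[check.name] = {"failure_rate": rate, "ok": ok}
        passed = passed and ok
    return passed, report

# Hypothetical rules for an order feed.
VALID_CURRENCIES = {"USD", "EUR", "GBP"}
checks = [
    Check("order_id_present", lambda r: r.get("order_id") is not None),
    Check("amount_non_negative",
          lambda r: isinstance(r.get("amount"), (int, float)) and r["amount"] >= 0),
    Check("currency_recognized", lambda r: r.get("currency") in VALID_CURRENCIES,
          max_failure_rate=0.05),
]

batch = [
    {"order_id": 101, "amount": 25.0, "currency": "USD"},
    {"order_id": 102, "amount": 12.5, "currency": "EUR"},
    {"order_id": None, "amount": 8.0, "currency": "XYZ"},
]

passed, report = run_quality_gate(batch, checks)
print("batch accepted" if passed else "batch blocked")
for name, result in report.items():
    print(name, result)
```

Here the batch is blocked: one row lacks an `order_id`, and one has an unrecognized currency, which exceeds the assumed 5% tolerance. Per-check thresholds let you be strict about critical fields while tolerating occasional noise in less important ones.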

Also, investing in a robust data management system capable of handling high data volumes without compromising on data quality can be a significant aid to tackling this issue.

How Data Leaders Can Help Champion Data Quality Initiatives

As the guardians of data integrity, data leaders are crucial in driving quality initiatives within an organization. They’re responsible for setting data quality standards, devising strategies for data quality management, and ensuring compliance throughout the enterprise.

Data leaders should be proactive in promoting a data-oriented culture, where quality data is valued by all employees. They should also work with different business units and the IT department to align data quality goals with overall business objectives.

Moreover, data leaders should adopt a continuous improvement mindset, seeking to refine and enhance data quality initiatives to keep up with evolving business needs and industry standards.

Maintaining data quality at scale requires a concerted effort from the entire organization, guided and motivated by its data leaders. It’s a strategic approach to data management that makes the right data available at the right time, supporting optimal decision-making and the effective functioning of AI models.

As with any change, it works from the top down: data leaders must set the example and teach these standards to the people working under them.


Data Quality Management in Enterprise Decision-Making

The quality of your organization’s data makes a major difference in its ability to make decisions. As you go forward, consider the kinds of decisions your company makes and the quality of data it uses to inform those decisions.

Quality Data in Strategic Decision-Making Processes

Data quality management is crucial to an enterprise’s strategic decision-making processes. High-quality data provides a solid foundation for insights and analytics, empowering an enterprise to make informed business decisions accurately and quickly.

Why Data Quality Is Crucial for Effective Decision-Making

Having quality data at your fingertips can significantly enhance the decision-making process. It gives decision-makers a comprehensive overview of the business operations, customer behavior, and market trends. This, in turn, leads to formulating strategies that are not just based on intuition but are rooted in facts and figures.

High-quality data can also highlight potential gaps or challenges hindering your business growth, thus empowering you to tackle them head-on, leading to improved business efficiency and profitability.

Equally important, good-quality data can unveil hidden opportunities for expansion and innovation, offering a competitive edge in the rapidly changing marketplace.

Data Quality Management for Predictive AI Models

High-quality data is a boon no matter what you use it for, but it’s especially critical when training predictive AI models. If you’re planning to use your data to build predictive AI models, consider the following first:

Quality Data in AI Model Training and Validation

In the context of AI predictive models, the quality of the data used for model training and validation contributes significantly to the model’s accuracy. High-quality datasets are vital in developing robust, reliable models that accurately predict trends and make apt recommendations. Conversely, poor data quality can lead to skewed or biased models and disruptive errors in prediction.

Best Practices for Ensuring Data Quality in AI Projects

Best practices for ensuring data quality in AI projects include careful data selection and cleansing, regular model review and maintenance, and automated data validation. It is also crucial to have a diverse and representative dataset for training AI models to prevent bias in predictions.
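Automated data validation can run before every training job. The following sketch, assuming a hypothetical churn dataset with a `churned` label and a `customer_id` key, flags missing labels, underrepresented classes, and records that leak from the training set into the validation set. Class balance is only one narrow facet of representativeness, so treat this as a starting point rather than a full bias check; the 10% minimum class share is an arbitrary assumption.

```python
from collections import Counter

def validate_training_data(train_rows, valid_rows, label, key, min_class_share=0.1):
    """Return a list of problems found before training (empty means none detected).

    Illustrative checks only:
    - every training row has a label
    - each label class is at least `min_class_share` of the labeled training rows
    - no record (identified by `key`) appears in both training and validation sets
    """
    problems = []

    missing = sum(1 for r in train_rows if r.get(label) is None)
    if missing:
        problems.append(f"{missing} training row(s) have no '{label}' value")

    counts = Counter(r[label] for r in train_rows if r.get(label) is not None)
    total = sum(counts.values())
    for cls, n in counts.items():
        share = n / total
        if share < min_class_share:
            problems.append(f"class {cls!r} is only {share:.1%} of labeled training rows")

    overlap = {r[key] for r in train_rows} & {r[key] for r in valid_rows}
    if overlap:
        problems.append(f"{len(overlap)} record(s) appear in both training and validation sets")

    return problems

# Hypothetical churn data: 19 non-churners, 1 churner, 1 unlabeled row.
train = [{"customer_id": i, "churned": 0} for i in range(19)]
train.append({"customer_id": 19, "churned": 1})
train.append({"customer_id": 20, "churned": None})
valid = [{"customer_id": 19, "churned": 1}, {"customer_id": 30, "churned": 0}]

problems = validate_training_data(train, valid, label="churned", key="customer_id")
for p in problems:
    print(p)
```

With the sample data it reports three problems: one unlabeled row, a churn class making up only 5.0% of labeled training rows, and one customer present in both splits.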

All of these factors keep low-quality data out of your training sets, allowing your models to train more effectively and produce more trustworthy predictions. In turn, the business strategy and decisions based on the model’s predictions will produce better outcomes.

Best Practices for Data Quality Management

As you start to take data quality more seriously, there are a few best practices you can use to ensure only the best possible data makes it into your datasets.

It’s important to note that these processes are not one-time activities. Keeping data accurate, consistent, and reliable requires regular checks and updates. Consider adopting a lifecycle approach to data quality, treating it as an ongoing process rather than a one-off project.


Data Profiling and Cleansing Techniques

Data profiling and cleansing are critical components of data quality management. Data profiling involves an in-depth examination of each dataset, including data type, structure, and content, to ensure quality and consistency. Data cleansing refers to identifying and correcting or removing corrupt or inaccurate records.
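A minimal sketch of both steps in plain Python follows. `profile` summarizes each column’s observed types, missing values, and distinct values; `cleanse` applies a few hypothetical repair rules (trim whitespace, lowercase emails, coerce numeric ages, drop rows with no usable email). Real profiling tools compute far more statistics, and the right cleansing rules depend entirely on your data.

```python
from collections import Counter

def profile(rows):
    """Summarize each column: observed types, missing values, distinct values, most common value."""
    columns = sorted({col for row in rows for col in row})
    summary = {}
    for col in columns:
        values = [row.get(col) for row in rows]
        present = [v for v in values if v not in (None, "")]
        summary[col] = {
            "types": sorted({type(v).__name__ for v in present}),
            "missing": len(values) - len(present),
            "distinct": len(set(present)),
            "most_common": Counter(present).most_common(1)[0][0] if present else None,
        }
    return summary

def cleanse(rows):
    """Apply hypothetical repair rules and drop rows that cannot be repaired."""
    cleaned = []
    for row in rows:
        # Copy the row while trimming surrounding whitespace from every text value.
        row = {k: v.strip() if isinstance(v, str) else v for k, v in row.items()}
        email = (row.get("email") or "").lower()
        if "@" not in email:
            continue  # no usable email: remove the record
        row["email"] = email
        age = row.get("age")
        if isinstance(age, str):
            # Coerce numeric strings like "34"; unparseable values become None.
            try:
                row["age"] = int(age)
            except ValueError:
                row["age"] = None
        cleaned.append(row)
    return cleaned

raw = [
    {"email": "  Ana@Example.com ", "age": "34"},
    {"email": "ana@example.com", "age": 34},
    {"email": "", "age": 51},
    {"email": "bo@example.com", "age": "unknown"},
]

summary = profile(raw)
cleaned = cleanse(raw)
print(summary["age"])
print(cleaned)
```

The profile immediately shows a problem: `age` holds both `int` and `str` values. After cleansing, three of the four records survive, and the first two are now identical, which is exactly the kind of duplicate that data matching is meant to catch.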

Data matching is also important since it identifies duplicate entries or links related entries within or across datasets. Data enrichment is a cleansing technique in which the value of an original dataset is enhanced by appending related attributes from other data sources.
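The sketch below illustrates both techniques under simple assumptions. Matching uses the standard library’s `difflib.SequenceMatcher` to score normalized company names, with a similarity threshold of 0.85 chosen arbitrarily; enrichment appends city and state from a hypothetical postal-code reference table. Production matching usually combines several fields and avoids comparing every pair of records, since pairwise comparison grows quadratically with the number of records.

```python
from difflib import SequenceMatcher
from itertools import combinations

def normalize(name):
    """Lowercase, drop punctuation, and collapse whitespace so trivial variants compare equal."""
    kept = "".join(ch for ch in name.lower() if ch.isalnum() or ch.isspace())
    return " ".join(kept.split())

def find_likely_duplicates(rows, field, threshold=0.85):
    """Return (i, j, score) for record pairs whose normalized `field` values are similar enough."""
    pairs = []
    for (i, a), (j, b) in combinations(enumerate(rows), 2):
        score = SequenceMatcher(None, normalize(a[field]), normalize(b[field])).ratio()
        if score >= threshold:
            pairs.append((i, j, round(score, 2)))
    return pairs

def enrich(rows, reference, key, attrs):
    """Return copies of rows with selected attributes appended from a reference table."""
    lookup = {ref[key]: ref for ref in reference}
    enriched = []
    for row in rows:
        extra = lookup.get(row[key], {})  # unmatched rows get None for each attribute
        enriched.append({**row, **{a: extra.get(a) for a in attrs}})
    return enriched

customers = [
    {"name": "Acme Corp.", "zip": "10001"},
    {"name": "ACME Corp", "zip": "10001"},
    {"name": "Globex Inc", "zip": "94105"},
]
# Hypothetical reference data, e.g. a postal-code lookup table.
zip_reference = [
    {"zip": "10001", "city": "New York", "state": "NY"},
    {"zip": "94105", "city": "San Francisco", "state": "CA"},
]

duplicates = find_likely_duplicates(customers, "name")
enriched = enrich(customers, zip_reference, "zip", ["city", "state"])
print(duplicates)
print(enriched)
```

`Acme Corp.` and `ACME Corp` normalize to the same string and are flagged as a likely duplicate, while every customer record gains `city` and `state` attributes.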

All of these techniques help maintain data accuracy, consistency, and reliability.

Implementing Data Quality Tools and Technologies

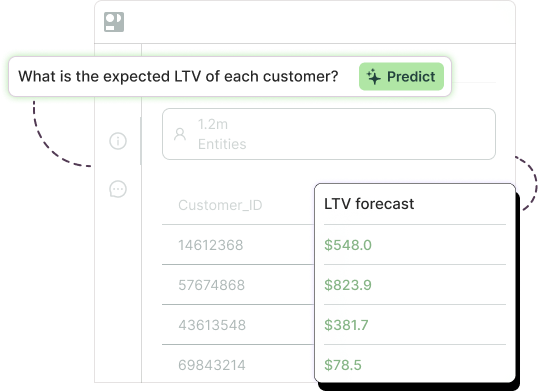

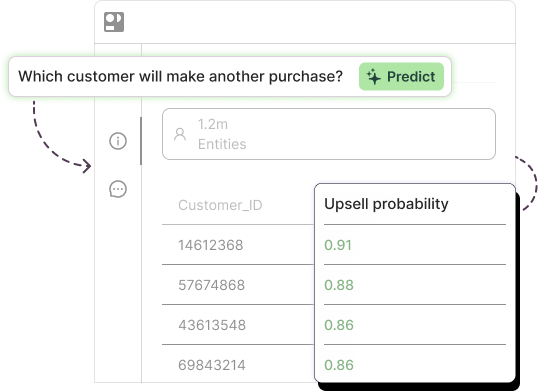

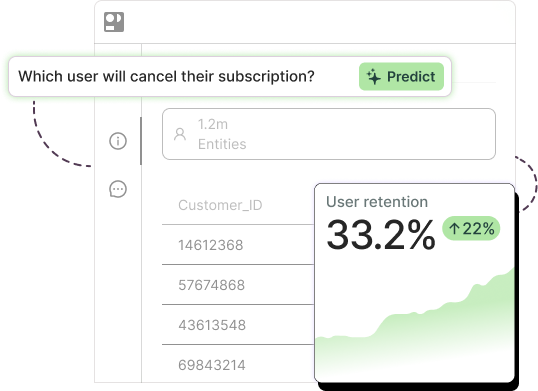

Fortunately, these processes no longer have to be carried out tediously by hand, which would be impossible in today’s big data environment. Today’s tools provide features like automated data profiling, cleansing, migration, and integration, which help manage data quality efficiently while saving time and resources. (Pecan’s predictive analytics platform, powered by Predictive GenAI, also provides some automated data cleaning and preparation, making the path to an AI model even quicker.)

These tools can detect data anomalies and inconsistencies early on, preemptively addressing potential issues without needing long and tedious human review.
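One simple way to detect anomalies in a numeric column is Tukey’s fences: flag any value more than 1.5 interquartile ranges below the first quartile or above the third. The sketch below applies it to a hypothetical series of daily order counts using Python’s `statistics.quantiles`. It only illustrates the general idea; it is not necessarily how Pecan or any other tool detects anomalies.

```python
from statistics import quantiles

def iqr_outliers(values, k=1.5):
    """Return (index, value) pairs outside [Q1 - k*IQR, Q3 + k*IQR] (Tukey's fences)."""
    q1, _, q3 = quantiles(values, n=4)  # quartile cut points; needs at least two values
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [(i, v) for i, v in enumerate(values) if v < low or v > high]

# Hypothetical daily order counts with two suspicious days.
daily_order_counts = [120, 118, 125, 130, 122, 119, 5, 127, 121, 940]
print(iqr_outliers(daily_order_counts))
```

It flags the days with 5 and 940 orders (indices 6 and 9), which could point to a partial data load and a duplicated import. Both are worth investigating before they reach a report or a training set.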

Prioritize Data Quality Management in Your Organization

Data quality management is a vital aspect of any enterprise. High-quality data contributes to accurate decision-making, improves business outcomes, and supports the creation of successful predictive AI models. It’s essential to prioritize data quality management and take strategic steps toward improving and maintaining it.

Ultimately, the responsibility of driving data quality initiatives falls on the shoulders of data leaders. They should stay at the forefront, championing data quality and integrity within the organization.

Prioritizing data quality management can open many opportunities for growth, sustainability, and innovation for enterprises in this data-driven era, so it’s well worth taking those first steps to get the ball rolling.

Let Pecan help you expedite your data quality management and predictive AI projects. Learn more about our automated data preparation capabilities and intuitive, easy-to-use Predictive GenAI. Get a guided tour or try a free trial today.