Two years ago, the question most business teams asked was: “Should we build an AI model?” In 2026, that question has shifted to something more urgent: “How quickly can we get one running?”

And honestly, the answer depends entirely on which path you take.

The landscape for building predictive models has changed dramatically. AI agents now handle tasks that used to require entire data science teams. No-code platforms have matured past their early awkward phase. Open-source frameworks keep getting more powerful. And yes, you can still write everything from scratch in Python if that’s your thing.

We’ve broken down five distinct approaches to building your own AI model, ranked from easiest to most complex. Each one comes with real cost data, honest tradeoffs, and a clear picture of who it’s actually built for. Whether you’re a marketing ops manager trying to predict customer churn or an ML engineer tuning gradient-boosted trees, there’s a path here for you.

Let’s get into it.

Quick Refresher: What’s an AI Model, Really?

We won’t belabor this. If you’ve made it to 2026 without hearing the term, congratulations on your digital detox 🙂

An AI model is a program trained on historical data to recognize patterns and make predictions about new, unseen data. Feed it past customer behavior, and it learns to flag who’s likely to leave next quarter. Show it years of sales data, and it spots demand patterns your team would miss.
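
To make that concrete, here’s a deliberately tiny sketch in plain Python. The data, the single feature (days since last login), and the function names are all made up for illustration. Real models weigh many features at once, but the train-then-predict loop has the same shape.

```python
# Toy illustration: "training" a one-rule model on made-up historical data.
# Each record is (days_since_last_login, churned); the values are hypothetical.
history = [
    (2, False), (5, False), (7, False), (12, False),
    (20, True), (25, True), (31, True), (40, True),
]


def train_threshold(records):
    """Pick the inactivity threshold that classifies the history best."""
    best_threshold, best_correct = None, -1
    for threshold, _ in records:
        # Rule under test: predict churn when inactivity >= threshold.
        correct = sum((days >= threshold) == churned for days, churned in records)
        if correct > best_correct:
            best_threshold, best_correct = threshold, correct
    return best_threshold


def predict(threshold, days_since_last_login):
    """Apply the learned rule to a new, unseen customer."""
    return days_since_last_login >= threshold


threshold = train_threshold(history)
print(threshold)               # learned from the data, not hand-picked
print(predict(threshold, 28))  # new customer, inactive for 28 days
print(predict(threshold, 3))   # new customer, active 3 days ago
```

The point is not the rule itself. It’s that the threshold comes out of the historical records rather than someone’s gut feeling.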

The magic isn’t in the model itself. It’s in what your team does with the predictions it produces. If you want the deep dive on model types, algorithms, and methodology, our complete guide to predictive modeling covers all of that in detail.

What matters for this post is the “how do I actually build one” part. So let’s compare your options.

The 5 Methods, Ranked

Here’s a quick snapshot before we dig into each approach:

| Method | Skill Level Needed | Time to First Model | Year 1 Cost Range | Best For |
| --- | --- | --- | --- | --- |
| AI Agent Platforms | Low | minutes to days | $12K – $60K | Business teams who want predictions, not projects |
| No-Code / Low-Code Platforms | Low to moderate | days to weeks | $600 – $60K | Analysts, rapid prototyping, SMBs |
| Cloud AutoML Services | Moderate | 1 – 4 weeks | $15K – $120K (+ team costs) | Technical teams already in a cloud ecosystem |
| Open-Source AutoML Libraries | Moderate to high | 3 – 8 weeks | $5K – $50K (+ significant people costs) | Data scientists who want automation with control |
| Custom Code (Traditional Programming) | High | 2 – 6+ months | $330K – $1M+ | Research teams, novel architectures, full control |

Method 1: AI Agent Platforms (Easiest)

This category barely existed a year ago. Now it’s reshaping how businesses approach predictive analytics entirely.

AI agent platforms use autonomous agents to handle the full predictive workflow on your behalf. You bring a business question, “Which customers are most likely to churn next quarter?” or “What will demand look like for this SKU in March?”, and the system figures out the rest. Data preparation, feature engineering, model selection, validation, deployment. All of it.

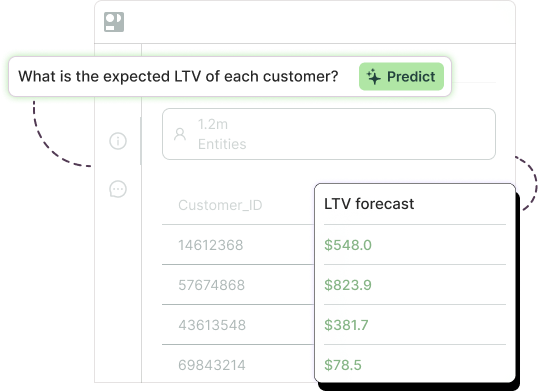

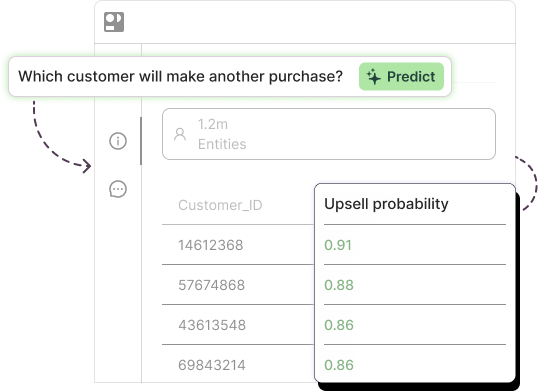

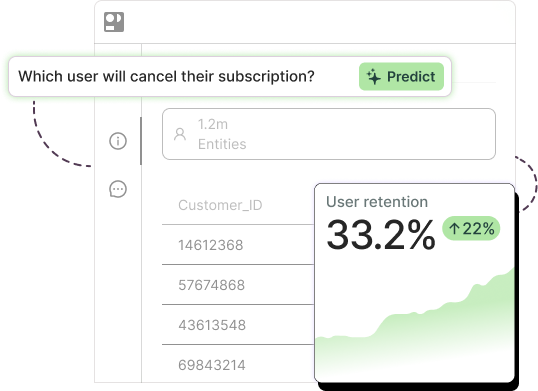

Pecan’s Predictive AI Agent, launched in January 2026, works exactly this way. You ask a question in plain English, and the agent interprets your data’s unique structure, builds a validated model, and delivers predictions directly into the tools your team already uses, whether that’s Salesforce, HubSpot, or your data warehouse.


The reason this approach ranks as “easiest” isn’t just the interface. It’s that the agent handles the parts most teams get stuck on: cleaning messy data, choosing the right algorithm, validating that predictions are actually reliable, and catching common pitfalls like data leakage or overfitting. Built-in guardrails mean you’re not just getting a model. You’re getting one you can trust.
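
If leakage and overfitting sound abstract, here’s roughly what checks for them can look like. This is a generic sketch, not a description of how any particular platform implements its guardrails. The function names, field names, dates, and the 0.10 gap threshold are all assumptions chosen for illustration.

```python
from datetime import date


def chronological_split(rows, cutoff):
    """Train on rows observed before the cutoff, validate on the rest.

    Splitting by time (rather than at random) keeps future information
    out of training, which is one common source of data leakage.
    """
    train = [r for r in rows if r["observed_on"] < cutoff]
    holdout = [r for r in rows if r["observed_on"] >= cutoff]
    return train, holdout


def leaky_columns(feature_dates, prediction_date):
    """Flag features recorded after the moment the prediction is made."""
    return sorted(
        name for name, recorded in feature_dates.items()
        if recorded > prediction_date
    )


def looks_overfit(train_score, holdout_score, max_gap=0.10):
    """Flag a model whose holdout score trails its training score badly."""
    return (train_score - holdout_score) > max_gap


# Hypothetical data for illustration only.
rows = [
    {"customer": "a", "observed_on": date(2025, 3, 1)},
    {"customer": "b", "observed_on": date(2025, 6, 1)},
    {"customer": "c", "observed_on": date(2025, 9, 1)},
    {"customer": "d", "observed_on": date(2025, 12, 1)},
]
train, holdout = chronological_split(rows, cutoff=date(2025, 9, 1))
print(len(train), len(holdout))

feature_dates = {
    "plan_tier": date(2025, 8, 1),
    "cancellation_reason": date(2025, 10, 15),  # only known after churn
}
print(leaky_columns(feature_dates, prediction_date=date(2025, 9, 1)))
print(looks_overfit(train_score=0.98, holdout_score=0.71))
```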

Who it’s for: Marketing ops, revenue ops, customer success, finance, and planning teams who need predictions to inform real decisions. You don’t need a data science background. You need a business question and access to your data.

What it costs: Enterprise SaaS pricing, typically $950 – $5,000+/month depending on usage and scale.

The tradeoffs: You’re working within the platform’s framework, which means less granular control than building from scratch. For highly specialized or novel modeling tasks (think: custom neural architectures for genomics data), you’ll still want a dedicated data science approach. But for the business prediction use cases that drive 90% of real-world ROI, like churn, lead scoring, lifetime value, and demand forecasting, agent platforms get you there faster than any alternative, and at a lower total cost than any approach that requires hiring ML talent.

This isn’t just Pecan’s bet, by the way. DataRobot recently pivoted its entire platform to an “Agent Workforce” model. H2O.ai is converging predictive and generative AI through agent-based workflows. Gartner predicts that 40% of enterprise applications will incorporate task-specific AI agents by the end of 2026, up from less than 5% in 2025. The shift is happening fast.

Method 2: No-Code / Low-Code Platforms

If AI agent platforms are the newest kid on the block, no-code tools are the slightly older sibling who’s finally hit their stride.

These platforms let you upload data (often just a CSV), pick what you want to predict, and get a trained model back in minutes. No Python. No command line. Some require SQL knowledge, others don’t even go that far.

The main players in 2026 include Obviously AI (plans from free to $999/month, great for quick classification and regression tasks), MindsDB (open-source core with a unique SQL-based interface for ML operations), and AWS SageMaker Canvas (Amazon’s no-code offering that plugs into the broader SageMaker ecosystem at $1.90/hr of workspace time). Microsoft’s AI Builder, integrated with Power Platform, runs about $500/month and works well for organizations already deep in the Microsoft stack.

If you’re exploring this route, our breakdown of how to choose the best AutoML solution walks through five essential criteria worth evaluating before you commit.

Who it’s for: Business analysts, ops teams at SMBs, and anyone who needs a working model without waiting months for a data science team to deliver one.

What it costs: Free (for limited open-source options) up to ~$1,000/month for mid-tier plans. Year 1 total cost of ownership (TCO) can be as low as $600 for simple use cases, though enterprise deployments push into the $30K – $60K range.

The tradeoffs: Customization is limited. If the platform doesn’t support your specific model type or data structure, you’re stuck. Prediction accuracy on complex, high-dimensional datasets may lag behind what a skilled data scientist could achieve with custom code. And as your data volume grows, costs can scale in ways that aren’t always transparent upfront.

Method 3: Cloud AutoML Services

The big three cloud providers, Google, Amazon, and Microsoft, each offer managed AutoML services that automate significant chunks of the model-building process while giving technical users more control than pure no-code tools.

Google Vertex AI has evolved into a comprehensive ML platform with AutoML for tabular, image, text, and video data. Training runs for tabular models cost roughly $21/node-hour, and the broader platform now integrates Gemini for generative AI workflows plus a new Agent Engine for deploying AI agents at scale. New users get $300 in free credits to experiment. The main criticism? Pricing complexity. It’s genuinely difficult to predict what your bill will look like until you’re already running.

AWS SageMaker combines Canvas (no-code, $1.90/hr) with Autopilot (automated model exploration) and the full SageMaker Studio for hands-on ML engineering. Training instance costs range from $0.05/hr for CPU to $22/hr for an 8×A100 GPU cluster. The new Unified Studio brings data engineering and ML into a single IDE, which is a welcome simplification for teams that were previously juggling five different AWS consoles.

Azure Machine Learning takes a slightly different tack with its Designer tool (drag-and-drop pipeline building) and tight integration with Microsoft Fabric and Azure OpenAI. AutoML itself is free. You just pay for the underlying compute VMs, which start around $0.36/hr. The Responsible AI dashboard, with built-in bias detection and fairness metrics, is a standout feature that competitors haven’t matched as comprehensively.

Who it’s for: Technical teams that already live in one of the major cloud ecosystems and have at least one or two people comfortable with ML concepts. You don’t need to be a deep learning researcher, but you should know what a training job is.

What it costs: Compute charges typically run $6K – $60K/year depending on usage, but don’t forget that someone on your team needs to manage the process. The real year 1 TCO, including at least one technical hire or contractor, often lands between $80K and $200K.
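
Here’s the back-of-the-envelope version of that math. The $21/node-hour rate is the rough Vertex AI tabular training figure quoted earlier. The 40 node-hours per month and the $100K contractor cost are assumptions picked purely for illustration, and the sketch ignores serving, storage, and data transfer charges.

```python
def yearly_compute_cost(node_hours_per_month, rate_per_node_hour):
    """Annual training spend for a steady monthly workload."""
    return 12 * node_hours_per_month * rate_per_node_hour


def year_one_cloud_tco(compute_cost, people_cost):
    """Compute plus the person (or people) who run the platform."""
    return compute_cost + people_cost


VERTEX_TABULAR_RATE = 21  # USD per node-hour, rough figure from the text

# Assumed workload and staffing cost, for illustration only.
compute = yearly_compute_cost(node_hours_per_month=40,
                              rate_per_node_hour=VERTEX_TABULAR_RATE)
total = year_one_cloud_tco(compute, people_cost=100_000)

print(compute)  # 10080
print(total)    # 110080
```

Notice how little of the total is compute. In this scenario, the person running the platform accounts for more than 90% of the bill.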

The tradeoffs: These platforms are powerful but sprawling. Each offers dozens of services, pricing tiers, and configuration options. For a marketing ops team that just wants to score leads or predict churn, it’s like using an aircraft carrier to cross a lake. Works, sure. But there are simpler boats.

Method 4: Open-Source AutoML Libraries

Here’s the sweet spot for data scientists who want automation without giving up control.

Open-source AutoML libraries handle the tedious parts of model building (algorithm selection, hyperparameter tuning, ensembling) while letting you customize everything else. They sit between “the cloud platform does it all” and “I’m writing every line from scratch.”
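
Under the hood, the core idea is a search loop: try several model families and settings, score each on held-out data, keep the winner. The sketch below is a toy version in plain Python. It is not the API of any real AutoML library, the two model families and all of the data are invented for illustration, and it leaves out ensembling entirely.

```python
def make_threshold_model(train, threshold):
    """Model family 1: predict True when x reaches a fixed threshold."""
    return lambda x: x >= threshold


def make_knn_model(train, k):
    """Model family 2: majority vote among the k nearest training points."""
    def predict(x):
        nearest = sorted(train, key=lambda row: abs(row[0] - x))[:k]
        votes = sum(label for _, label in nearest)
        return votes * 2 > k
    return predict


def accuracy(model, rows):
    """Share of rows where the model's prediction matches the label."""
    return sum(model(x) == label for x, label in rows) / len(rows)


def auto_select(train, valid, search_space):
    """Try every (family, hyperparameter) pair; keep the best on validation."""
    best = None
    for name, (builder, grid) in search_space.items():
        for param in grid:
            model = builder(train, param)
            score = accuracy(model, valid)
            if best is None or score > best[0]:
                best = (score, name, param, model)
    return best


# Hypothetical one-feature dataset: (feature value, label).
train = [(1, False), (2, False), (3, False), (6, True), (7, True), (8, True)]
valid = [(0, False), (4, False), (5, True), (9, True)]

search_space = {
    "threshold": (make_threshold_model, [2, 5, 8]),
    "knn": (make_knn_model, [1, 3]),
}
score, family, param, model = auto_select(train, valid, search_space)
print(family, param, score)
```

Real libraries search far larger spaces and budget their compute carefully, but the select-by-validation-score loop is the part they automate for you.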

The standout in 2026 is AutoGluon, backed by AWS, which consistently tops benchmarks; in its authors’ own evaluation, it beat 99% of participating data scientists in two Kaggle competitions. It handles tabular, text, image, and time-series data with minimal configuration. FLAML (from Microsoft Research) is the lightweight alternative: incredibly efficient, often finding near-optimal models with a fraction of the compute. And PyCaret remains the lowest-barrier option, wrapping complex ML workflows into as few as 3-5 lines of Python.

For the gradient-boosted model workhorses that dominate tabular prediction tasks, XGBoost (now at version 3.2) and LightGBM (4.6) continue to be the gold standard. They consistently outperform deep learning on structured business data, which is exactly the kind of data most business prediction tasks involve.
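
If you’ve never looked inside a gradient-boosted model, the core trick is simple: fit a small tree to the errors of the model so far, add a damped version of it, and repeat. Here’s a toy one-feature version in plain Python using one-split “stumps.” It illustrates the idea only. It is not how XGBoost or LightGBM are implemented, and the data and function names are made up.

```python
def fit_stump(xs, residuals):
    """Find the single split on x that best fits the current residuals."""
    best = None
    # Skipping the smallest value guarantees both sides are non-empty.
    for split in sorted(set(xs))[1:]:
        left = [r for x, r in zip(xs, residuals) if x < split]
        right = [r for x, r in zip(xs, residuals) if x >= split]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        error = (sum((r - left_mean) ** 2 for r in left)
                 + sum((r - right_mean) ** 2 for r in right))
        if best is None or error < best[0]:
            best = (error, split, left_mean, right_mean)
    _, split, left_mean, right_mean = best
    return lambda x: left_mean if x < split else right_mean


def fit_boosted(xs, ys, rounds=20, learning_rate=0.3):
    """Start from the mean, then repeatedly fit stumps to what is left over."""
    base = sum(ys) / len(ys)
    stumps = []
    predictions = [base] * len(xs)
    for _ in range(rounds):
        residuals = [y - p for y, p in zip(ys, predictions)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        predictions = [p + learning_rate * stump(x)
                       for x, p in zip(xs, predictions)]

    def predict(x):
        return base + learning_rate * sum(s(x) for s in stumps)
    return predict


def mse(predict, xs, ys):
    """Mean squared error of a prediction function on (xs, ys)."""
    return sum((predict(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Hypothetical data with a jump between x=4 and x=5.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [10, 12, 11, 13, 30, 32, 31, 33]

model = fit_boosted(xs, ys)
mean_y = sum(ys) / len(ys)
baseline_mse = mse(lambda x: mean_y, xs, ys)
boosted_mse = mse(model, xs, ys)
print(baseline_mse, boosted_mse)
```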

Who it’s for: Data scientists and ML engineers who want speed without sacrificing explainability or control. Also great for teams evaluating multiple approaches before settling on a production solution.

What it costs: The software is free. The hidden cost is people. You need at least one experienced data scientist, and realistically a data engineer too, to manage infrastructure, pipelines, and monitoring. Year 1 TCO runs $5K – $50K for compute and tooling, plus $200K – $400K in salary costs for the team running it.

The tradeoffs: “Free” is misleading when 80-85% of your total cost is headcount. You’re responsible for data pipelines, model monitoring, retraining schedules, and deployment infrastructure. None of that comes out of the box. For a deeper look at automating the data science workflow to reduce this overhead, we’ve written extensively about what can (and can’t) be automated.

Method 5: Custom Code / Traditional Programming

The original approach. Still the right one in certain situations.

Building a model from scratch means writing your own data pipelines, choosing algorithms manually, tuning hyperparameters, building validation frameworks, and managing deployment infrastructure. It’s the most work. It’s also the most flexible.

The framework landscape in 2026 is dominated by PyTorch, which now claims over 55% of production ML workloads. The PyTorch Foundation has expanded into an umbrella organization hosting vLLM, DeepSpeed, and Ray. TensorFlow remains strong in enterprise production environments, and Keras 3 now runs as a multi-backend framework supporting JAX, TensorFlow, PyTorch, and OpenVINO. scikit-learn hit version 1.8 with a milestone feature: native Array API support that enables GPU computation through PyTorch and CuPy arrays. That’s a big deal for the workhorse library of traditional ML.

For the full walkthrough on this approach, our guide to building, training, and deploying a machine learning model breaks it into five manageable steps.

Who it’s for: Research teams pushing the boundaries of what’s possible. Companies with genuinely novel problems that don’t fit into any existing platform’s framework. Teams building proprietary IP around their modeling approach.

What it costs: The most expensive option, though not because of software (most tools are free and open-source). The cost is in people. A minimum viable data science team of 3-4 people runs $660K – $790K/year fully loaded. Add infrastructure, tooling, and the 6-12 months it takes to get from “hired the team” to “model in production,” and year 1 TCO easily exceeds $700K.

The tradeoffs: Total control comes with total responsibility. Every data quality issue, every model drift, every deployment headache is yours to solve. Sixty percent or more of project time typically goes to data engineering and preparation, not the actual modeling work. And turnover in data science roles runs 15-25% annually, so you may find yourself rebuilding institutional knowledge more often than you’d like.
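
Drift monitoring is a good example of something you’d have to build yourself. One common approach is the population stability index (PSI), which compares how a feature was distributed at training time with how it looks in production. The sketch below is a minimal version with made-up data and hand-picked bin edges. The 0.25 alert level is a commonly cited rule of thumb, not a standard.

```python
import math


def population_stability_index(expected, actual, bin_edges):
    """PSI between a training-time sample and a recent production sample."""
    def bin_shares(values):
        counts = [0] * (len(bin_edges) + 1)
        for v in values:
            # Bin index = number of edges the value has reached or passed.
            index = sum(v >= edge for edge in bin_edges)
            counts[index] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bin_shares(expected), bin_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical feature values (e.g. monthly spend) and bin edges.
training_sample = [10, 20, 30, 40, 50, 60, 70, 80]
production_sample = [60, 65, 70, 75, 80, 85, 90, 95]
edges = [25, 50, 75]

psi_same = population_stability_index(training_sample, training_sample, edges)
psi_shifted = population_stability_index(training_sample, production_sample, edges)

DRIFT_ALERT = 0.25  # assumed rule-of-thumb threshold
print(psi_same, psi_shifted > DRIFT_ALERT)
```

In a custom-code setup, you’d also own the scheduling, alerting, and retraining that a check like this triggers.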

The Real Cost of Building an AI Model in 2026

Let’s put actual numbers on this. One of the biggest mistakes teams make is underestimating the total cost of ownership, especially the hidden costs around people, infrastructure, and ongoing maintenance.

| Approach | Upfront Costs | Ongoing Monthly | Year 1 TCO | Time to First Model |
| --- | --- | --- | --- | --- |
| AI Agent Platforms (e.g., Pecan) | $0 – $2K | $950 – $5,000 | $12K – $60K | minutes to days |
| No-Code / Low-Code | $0 – $2K | $50 – $5,000 | $600 – $60K | days to weeks |
| Cloud AutoML (Vertex, SageMaker, Azure) | $0 – $5K | $1K – $10K | $15K – $120K (+ team costs) | 1 – 4 weeks |
| Open-Source AutoML (AutoGluon, FLAML) | $5K – $25K (infra) | $25K – $35K (mostly people) | $200K – $450K | 3 – 8 weeks |
| Custom Code (PyTorch, scikit-learn) | $50K – $150K (recruiting + tools) | $55K – $65K | $700K – $1M+ | 2 – 6+ months |

A few things jump out from this table.

First, there’s a massive gap between the methods that require hiring specialized talent and the ones that don’t. Cloud AutoML looks affordable until you realize someone still needs to manage the platform, and that “someone” costs $130K – $200K/year.

Second, time to first model matters more than most teams realize. If you need to demonstrate ROI by next quarter’s review, the methods at the bottom of this list won’t get you there. A six-month runway to first deployment is fine for a long-term strategic investment. It’s a problem when your VP needs churn numbers by March.

Third, the “open source is free” narrative deserves some serious pushback. Visible costs (compute, storage, tooling) represent only about 15-20% of what you’ll actually spend. The other 80-85% is people. And according to industry research, 68% of organizations underestimate what data preparation and retraining will cost them.
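
If you want to sanity-check the table against your own numbers, the arithmetic is just upfront cost plus twelve months of ongoing cost. The sketch below plugs in the upfront and monthly ranges from the table. The function and variable names are ours, and the results only roughly track the published Year 1 TCO column, which is rounded and may reflect costs not itemized here.

```python
def year_one_tco(upfront, monthly):
    """Upfront spend plus twelve months of ongoing cost."""
    return upfront + 12 * monthly


# (upfront low, upfront high), (monthly low, monthly high), in USD,
# copied from the cost table above.
cost_table = {
    "AI Agent Platforms": ((0, 2_000), (950, 5_000)),
    "No-Code / Low-Code": ((0, 2_000), (50, 5_000)),
    "Cloud AutoML": ((0, 5_000), (1_000, 10_000)),
    "Open-Source AutoML": ((5_000, 25_000), (25_000, 35_000)),
    "Custom Code": ((50_000, 150_000), (55_000, 65_000)),
}

estimates = {
    name: (year_one_tco(up_lo, mo_lo), year_one_tco(up_hi, mo_hi))
    for name, ((up_lo, up_hi), (mo_lo, mo_hi)) in cost_table.items()
}

for name, (low, high) in estimates.items():
    print(f"{name}: ${low:,} to ${high:,}")
```

Swap in your own quotes and salary figures, and the gap between the top and bottom rows usually survives.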

So, Which Method Is Right for You?

The honest answer: it depends on what you’re trying to accomplish and what resources you already have.

If you’re a business team without data science support and you need predictions that plug into your existing workflows, an AI agent platform is the fastest path to real results. You skip the infrastructure headaches and go straight to the business outcomes that matter.

If you’re exploring and prototyping before committing to a larger investment, no-code platforms give you a low-risk way to validate whether predictive modeling will actually move the needle for your use case.

If you already have technical talent and a cloud infrastructure, cloud AutoML services let you scale ML within your existing ecosystem without reinventing the wheel.

If you’re a data scientist who wants more control over the process but doesn’t want to manually tune every hyperparameter, open-source AutoML libraries hit the right balance.

And if you’re building something genuinely novel, with unique data types, custom architectures, or research-grade requirements, traditional programming remains the most flexible option, provided you have the team and budget to support it.

Whatever path you choose, the most important thing is starting with a clear business question. The best model in the world is useless if it’s answering the wrong question or if its predictions never make it into the hands of people who can act on them.

That’s actually the part we care about most at Pecan. Not just building models, but making sure predictions reach the teams who need them, in the tools they already use, quickly enough to make a real difference. If you want to see what that looks like for your data, get started with Pecan.