Predictive analytics gets sold as plug-and-play. The reality is messier.

I’ve watched dozens of mid-market and B2C teams try to roll out predictive projects in the last few years. Some land beautifully. Some stall in month three. The difference almost never comes down to the algorithm. It comes down to a handful of practical problems that nobody warns you about until you’re three months in and your CFO is asking why the model isn’t running yet.

Here are the six challenges that come up most often when implementing predictive analytics, and what actually fixes them.

1. Data quality is worse than you think

Every predictive analytics implementation assumes clean, structured, accessible data. Almost no real-world dataset starts that way.

You’ll find duplicate customer records, missing fields, inconsistent product taxonomies, and a customer ID that means three different things in three different systems. One Salesforce admin I talked to last month spent six weeks just reconciling email addresses across their CRM and their email platform before a model could even run.

The fix isn’t to wait until your data is perfect. It never will be. The fix is to pick a use case narrow enough that you only need a few specific tables to be in good shape, then expand from there. Modern predictive platforms can tolerate a surprising amount of messiness, such as missing fields or some duplicate records, as long as the core relationships (customer to transaction, transaction to outcome) are intact.
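To make the email-reconciliation example concrete, here is a minimal sketch of the first pass: normalize the join key, then see which records match across systems and which collide within one. The record shapes, IDs, and field names are made up for illustration, and real reconciliation usually needs more rules than trimming and lowercasing.

```python
from collections import defaultdict


def normalize_email(raw):
    """Trim and lowercase an email; return None if it is clearly unusable."""
    if not raw:
        return None
    email = raw.strip().lower()
    if email.count("@") != 1:
        return None
    return email


def reconcile(crm_records, email_platform_records):
    """Group record IDs from both systems under one normalized email key."""
    groups = defaultdict(lambda: {"crm": [], "email_platform": []})
    sources = (("crm", crm_records), ("email_platform", email_platform_records))
    for source, records in sources:
        for record in records:
            key = normalize_email(record.get("email"))
            if key is not None:
                groups[key][source].append(record["id"])
    return dict(groups)


def find_duplicates(groups, source):
    """Emails that map to more than one record inside a single system."""
    return {email: ids[source] for email, ids in groups.items() if len(ids[source]) > 1}


# Hypothetical sample records (not real data).
crm = [
    {"id": "C1", "email": "Ana@Example.com "},
    {"id": "C2", "email": "ana@example.com"},
    {"id": "C3", "email": "bo@example.com"},
    {"id": "C4", "email": None},
]
email_platform = [
    {"id": "E1", "email": "ANA@example.com"},
    {"id": "E2", "email": "cy@example.com"},
]

groups = reconcile(crm, email_platform)
crm_duplicates = find_duplicates(groups, "crm")
matched = sorted(e for e, ids in groups.items() if ids["crm"] and ids["email_platform"])

print("Duplicates inside the CRM:", crm_duplicates)
print("Emails present in both systems:", matched)
```

A check like this tells you quickly whether the one relationship your first use case depends on is intact, without cleaning everything else first.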

2. The skills gap is real, and hiring won’t close it

The standard advice is “hire data scientists.” Three problems with that. Data scientists are expensive. They’re hard to find. And once hired, they often get pulled into ad-hoc analysis instead of the predictive work they were brought in for.

Most mid-market companies will never have the in-house data science team they think they need. That’s actually fine. The whole category that’s grown over the past few years (predictive AI agents, AutoML platforms with guardrails, vertical predictive solutions) exists exactly because business teams need predictions without a data science org behind them.

The honest answer for most teams: skip the hire and get a tool that lets Marketing Ops, RevOps, or Customer Success people build and ship models themselves. That’s the bet Pecan was built around.

3. Picking the wrong use case to start with

This one’s preventable, and yet it happens constantly. A team gets excited about predictive analytics, picks an interesting problem, and discovers six weeks in that:

- The data they need doesn’t actually exist in usable form.

- The “prediction” they want is really a reporting or segmentation question about the past, not a forecast of a future outcome.

- The decision the model is supposed to inform happens once a year, so the model can’t show value fast enough.

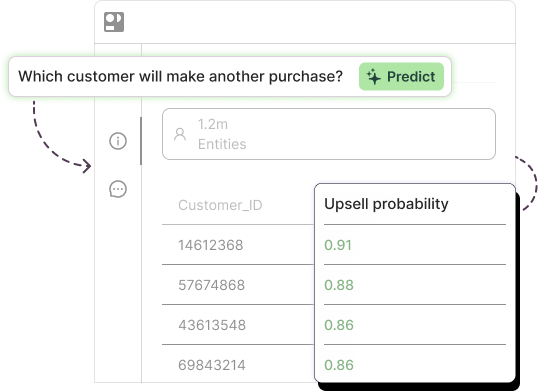

The way to avoid this: before you build anything, write down what action will be taken when the model produces an output. If you can’t name a specific person who will take a specific action based on the prediction, the use case isn’t ready. Move to one where the action is obvious. Churn predictions feed retention plays. Demand forecasts feed reorder decisions. Lead scores feed SDR prioritization. Those are use cases where the path from prediction to action is short.

4. Integration into existing tools

A model that lives in a notebook on someone’s laptop is a science project. A model whose output flows into Salesforce, HubSpot, your data warehouse, or your marketing automation platform is a business asset.

The gap between those two is bigger than most teams plan for. Integration eats engineering time, breaks when upstream systems change, and requires ongoing monitoring. This is where a lot of pilots quietly die. The model works. Nobody can act on it. Six months later, the project is shelved.

What works: pick a tool that ships predictions directly into the systems your team already uses. If your retention team lives in Salesforce, the prediction needs to show up as a field in Salesforce. If your CRM team lives in HubSpot, ditto. The closer the prediction sits to the decision, the more value you’ll capture.

For more on this in practice, our help center has detailed walk-throughs of common integrations.

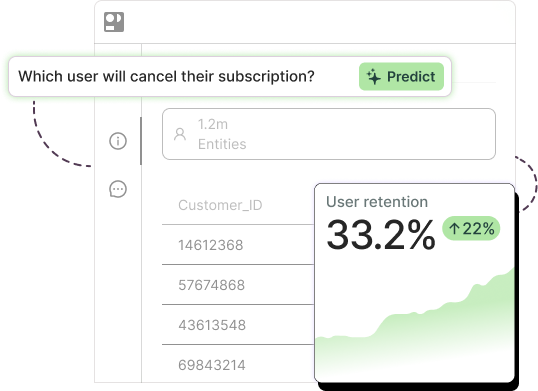

5. The black-box problem

Business teams won’t trust a model they don’t understand. They especially won’t act on it.

If your churn model says “this customer is at risk” but can’t explain why, your retention manager is going to second-guess every prediction. That’s how good models end up unused. Trust is built when the model can show its reasoning: this customer is at risk because their session frequency dropped 60%, their last support ticket was unresolved for nine days, and their product usage shifted away from the core feature.

The shift in the past two years has been toward predictive systems that produce both the prediction and an explanation in plain language. Don’t accept anything less. If you can’t explain a prediction to a non-technical stakeholder in one sentence, the prediction won’t get acted on. That’s one of the underrated risks of predictive analytics: the problem isn’t that the model is wrong but that it’s unintelligible.
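One simple way to get from a score to a sentence, sketched here for a linear (logistic) model: multiply each weight by how far the customer sits from a typical value, then report the largest risk-raising contributions. The feature names, weights, intercept, and baseline values below are hypothetical, and this is not a description of how any particular platform generates its explanations.

```python
import math

# Hypothetical coefficients for a logistic churn model (illustrative only).
WEIGHTS = {
    "session_frequency_drop_pct": 0.03,
    "days_ticket_unresolved": 0.15,
    "core_feature_usage_share": -2.0,
}
INTERCEPT = -1.0

# Hypothetical "typical customer" values used as the reference point.
BASELINE = {
    "session_frequency_drop_pct": 0.0,
    "days_ticket_unresolved": 1.0,
    "core_feature_usage_share": 0.6,
}

# Plain-language phrasing for each feature.
TEMPLATES = {
    "session_frequency_drop_pct": "session frequency dropped {value:.0f}%",
    "days_ticket_unresolved": "a support ticket went unresolved for {value:.0f} days",
    "core_feature_usage_share": "only {value:.0%} of usage is on the core feature",
}


def churn_probability(features):
    """Logistic score from the hypothetical weights above."""
    score = INTERCEPT + sum(WEIGHTS[name] * features[name] for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-score))


def top_reasons(features, limit=3):
    """Features that push risk above the baseline, largest contribution first."""
    contributions = {
        name: WEIGHTS[name] * (features[name] - BASELINE[name]) for name in WEIGHTS
    }
    risky = [(name, value) for name, value in contributions.items() if value > 0]
    return sorted(risky, key=lambda item: item[1], reverse=True)[:limit]


def explain(features, limit=3):
    """Turn the top contributions into one plain-language sentence."""
    reasons = [
        TEMPLATES[name].format(value=features[name])
        for name, _ in top_reasons(features, limit)
    ]
    if not reasons:
        return "No feature pushes this customer above typical risk."
    return "At risk because " + "; ".join(reasons) + "."


customer = {
    "session_frequency_drop_pct": 60.0,
    "days_ticket_unresolved": 9.0,
    "core_feature_usage_share": 0.2,
}

print(f"Churn probability: {churn_probability(customer):.2f}")
print(explain(customer))
```

Non-linear models need a different attribution method, but the output contract is the same: a score plus the two or three reasons a retention manager can read at a glance.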

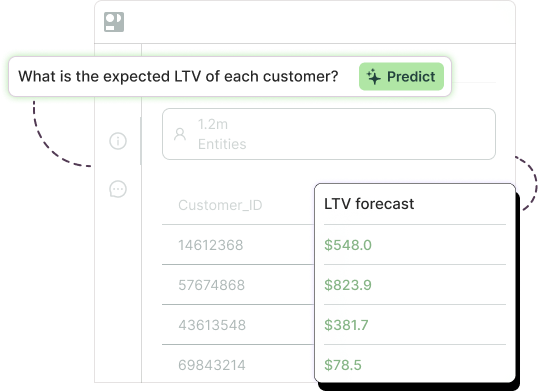

6. Measuring ROI and time-to-value

Predictive analytics projects often run out of patience before they run out of value. Six months in, the executive team starts asking what they got for the investment. If the answer is “we built a model and it’s 87% accurate,” you’re in trouble. Accuracy isn’t ROI.

The fix is to define ROI before you start, in the language of the business. For a churn use case, that’s saved revenue. For a demand model, that’s reduced overstock or fewer stockouts. For lead scoring, that’s higher conversion on a defined cohort. Demand forecasting is one of the cleanest use cases to measure: pick a SKU group, compare the model’s forecast error against the error of last year’s forecasting method on the same actuals, and add the planner time saved.
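The demand-forecasting comparison can be sketched in a few lines. This example uses weighted absolute percentage error (WAPE) as the error metric, which is my choice for illustration rather than something prescribed here, and the unit numbers are invented.

```python
def wape(actuals, forecasts):
    """Weighted absolute percentage error: total absolute error / total actual demand."""
    if len(actuals) != len(forecasts):
        raise ValueError("actuals and forecasts must have the same length")
    total_actual = sum(abs(a) for a in actuals)
    if total_actual == 0:
        raise ValueError("total actual demand is zero; WAPE is undefined")
    total_error = sum(abs(a - f) for a, f in zip(actuals, forecasts))
    return total_error / total_actual


# Invented weekly unit sales for one SKU group (not real data).
actual_units = [120, 80, 100, 100]
baseline_forecast = [100, 100, 100, 120]  # last year's forecasting method
model_forecast = [115, 85, 95, 105]       # the new predictive model

baseline_error = wape(actual_units, baseline_forecast)
model_error = wape(actual_units, model_forecast)
relative_improvement = (baseline_error - model_error) / baseline_error

print(f"Baseline WAPE: {baseline_error:.1%}")
print(f"Model WAPE:    {model_error:.1%}")
print(f"Error reduced by {relative_improvement:.0%} relative to the baseline")
```

Both methods are scored on the same actuals with the same metric, which is what makes the comparison defensible when leadership asks about it.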

Set the baseline first. Measure the lift in the same metric. Tell that story repeatedly to leadership. The teams that succeed with predictive analytics aren’t the ones with the most accurate models. They’re the ones who can defend the ROI in business terms.
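For a churn use case, "same metric, against a baseline" can be as simple as comparing retention in the cohort that got model-driven outreach with a holdout cohort that didn’t. A rough sketch, assuming a holdout group exists and using invented numbers and an assumed flat revenue per customer:

```python
def retention_rate(retained, total):
    """Share of a cohort still active at the end of the measurement window."""
    if total <= 0:
        raise ValueError("cohort must contain at least one customer")
    return retained / total


def saved_revenue(treated_retained, treated_total,
                  control_retained, control_total,
                  revenue_per_customer):
    """Retention lift over the holdout, and the revenue that lift represents."""
    lift = (retention_rate(treated_retained, treated_total)
            - retention_rate(control_retained, control_total))
    return lift, lift * treated_total * revenue_per_customer


# Invented cohort numbers (not real results).
lift, revenue = saved_revenue(
    treated_retained=450, treated_total=500,   # customers who got the retention play
    control_retained=400, control_total=500,   # holdout: business as usual
    revenue_per_customer=1200.0,               # assumed annual revenue per customer
)

print(f"Retention lift: {lift:.1%}")
print(f"Estimated saved revenue: ${revenue:,.0f}")
```

With small cohorts the difference can be noise, so treat a number like this as a starting estimate rather than a proven result.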

Where to start

If you’ve read this far and recognized your own organization in three or four of these, you’re not alone. Most teams hit at least half.

A short, practical sequence that works:

- Pick one use case with a clear action and a clear ROI metric.

- Use a platform that doesn’t require a data science team to operate.

- Get the prediction into the tool the decision-maker already uses.

- Measure lift against a baseline within 90 days.

- Once one use case is winning, expand from there.

For more reading on how to implement predictive analytics in your own org, our resources page has practical guides on each use case, and the Pecan blog covers specific implementations. Or if you want to see a model built on your actual data in 15 minutes, book a demo.