In a nutshell:

- Model deployment is a crucial but often overlooked step in moving predictive models into production environments.

- Best practices include version control, CI/CD pipelines, and monitoring for seamless integration and performance evaluation.

- Tools like Docker, Kubernetes, and automated ML platforms aid in efficient model deployment.

- Seamless integration, security, compliance, and sustained model performance all need deliberate attention.

- Establish feedback loops, monitor for drift, and handle real-time data to continuously improve and adapt ML models in production.

Deploying machine learning models is the crucial last step that bridges the gap between research and real-world impact. After countless hours of data wrangling, experimentation, and model training, the true test lies in how your model performs on new, unseen data in a production environment.

However, taking a finely tuned model from your local machine or notebook and putting it into a robust, scalable, and maintainable system is often easier said than done. From data versioning and pipeline monitoring to scaling computational resources and handling updates, data analysts and data scientists face numerous challenges when moving predictive models from development to production environments.

The importance of model deployment can’t be overstated, so we’ll examine the best practices for streamlining the process, explore the tools that aid efficient deployment, and address key aspects of seamless integration and maintaining model performance. By bridging the gap between machine learning and production, data professionals can successfully deploy their models and drive real business impact.

Best Practices for Model Deployment

Model deployment is more than just launching a predictive model in a live environment; it’s a systematic process that requires careful planning, execution, and management to achieve successful outcomes. This section will explore some of the best practices that can aid in effectively deploying your machine-learning models.

Version Control and Reproducibility

Robust version control keeps your evolving machine-learning models consistent and effective over time. As new data arrives and models are retrained, tracking changes to the model’s architecture, parameters, and hyperparameters becomes crucial. This practice not only facilitates reproducibility but also provides a clear audit trail for future reference.

Version control allows you to maintain a historical record of all changes, enabling you to revert to an earlier model if necessary. A well-established version control system like Git becomes a valuable tool in maintaining model integrity and reproducibility.
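As a minimal sketch of the idea, the snippet below derives a version identifier from the details that define a training run, so the same inputs always map to the same identifier. The function names, the model name, the hyperparameters, and the dataset label are all hypothetical and chosen only for illustration; a manifest like this could be committed to Git next to the training code.

```python
import hashlib
import json


def fingerprint(payload: dict) -> str:
    """Return a short, stable hash of a JSON-serializable dict."""
    # sort_keys makes the hash independent of key insertion order.
    canonical = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()[:12]


def build_manifest(model_name: str, hyperparameters: dict, data_snapshot_id: str) -> dict:
    """Bundle the details needed to identify and reproduce a training run."""
    manifest = {
        "model_name": model_name,
        "hyperparameters": hyperparameters,
        "data_snapshot_id": data_snapshot_id,
    }
    # The identifier is computed before it is added to the manifest.
    manifest["version_id"] = fingerprint(manifest)
    return manifest


manifest = build_manifest(
    "churn_classifier",                       # hypothetical model name
    {"learning_rate": 0.05, "max_depth": 6},  # hypothetical hyperparameters
    "2024-01-15-snapshot",                    # hypothetical dataset label
)
print(json.dumps(manifest, indent=2))
```

Because the identifier depends only on the manifest contents, retraining with a changed hyperparameter or a new data snapshot produces a new version, while an unchanged configuration does not.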

Continuous Integration and Continuous Deployment (CI/CD) Pipelines

Continuous integration and continuous deployment (CI/CD) pipelines are central to efficient ML model deployment. Continuous integration involves regularly merging code changes into a central repository, after which automated builds and tests are run. This practice helps detect errors sooner and reduces the manual effort of integrating changes.

Continuous Deployment takes it a step further by automatically deploying the changes to a production environment once they pass all automated tests. This significantly reduces the time between writing code or developing a model and its deployment, improving the speed and efficiency of your deployment process.
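One automated test a pipeline might run for a model is a quality gate that compares evaluation metrics with minimum thresholds before the deployment stage starts. The sketch below assumes the metrics arrive as a plain dictionary; the function name, metric names, and threshold values are hypothetical.

```python
def passes_quality_gate(metrics: dict, thresholds: dict) -> tuple[bool, list[str]]:
    """Compare evaluation metrics with minimum thresholds.

    Returns (ok, failures), where failures lists each unmet requirement.
    """
    failures = []
    for name, minimum in thresholds.items():
        value = metrics.get(name)
        if value is None:
            failures.append(f"{name}: metric missing")
        elif value < minimum:
            failures.append(f"{name}: {value:.3f} is below minimum {minimum:.3f}")
    return (not failures, failures)


# Hypothetical numbers a CI job might load from an evaluation report.
candidate_metrics = {"accuracy": 0.91, "recall": 0.78}
minimum_thresholds = {"accuracy": 0.90, "recall": 0.80}

ok, failures = passes_quality_gate(candidate_metrics, minimum_thresholds)
if not ok:
    # In a real pipeline, a non-zero exit code would stop the deployment stage.
    print("Deployment blocked:", "; ".join(failures))
```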

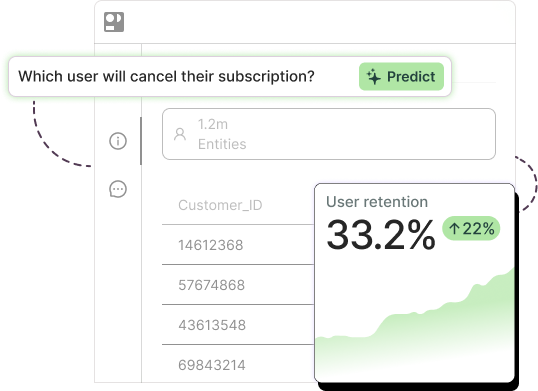

Monitoring and Performance Evaluation

Once your model has been deployed, establish a robust monitoring system to gauge its performance regularly. This involves tracking key performance indicators (KPIs) relevant to your specific use case or business objectives.

Having real-time monitoring and alert systems in place can help you detect any issues or anomalies in the model’s performance, allowing for swift corrective actions. Don’t forget to evaluate your model’s performance on a regular basis; if a once high-performing model starts to degrade, it might be a sign that the model needs to be retrained with new data.
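A simple way to picture this kind of monitoring is a rolling window over recent predictions whose true outcomes are known. The sketch below is an illustration under that assumption, not a full monitoring system; the class name, window size, and alert threshold are hypothetical.

```python
from collections import deque
from typing import Optional


class RollingAccuracyMonitor:
    """Track accuracy over the most recent `window` labeled predictions."""

    def __init__(self, window: int, alert_below: float):
        self.outcomes = deque(maxlen=window)  # 1 = correct, 0 = incorrect
        self.alert_below = alert_below

    def record(self, predicted, actual) -> None:
        """Store whether a prediction matched the observed outcome."""
        self.outcomes.append(1 if predicted == actual else 0)

    def accuracy(self) -> Optional[float]:
        """Accuracy over the current window, or None if nothing is recorded."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self) -> bool:
        # Only alert once the window is full, to avoid noisy early readings.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.accuracy() < self.alert_below


monitor = RollingAccuracyMonitor(window=4, alert_below=0.75)
for predicted, actual in [(1, 1), (0, 1), (1, 0), (1, 1)]:
    monitor.record(predicted, actual)
if monitor.should_alert():
    print("Accuracy over the last 4 predictions:", monitor.accuracy())
```

In practice the alert would feed a paging or dashboard tool, and the tracked metric would be whichever KPI matters for the use case.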


Tools for Efficient Model Deployment

A variety of tools have emerged that make the model deployment process more manageable and effective. Let’s look at some of them in detail.

Docker and Containerization

Docker has become an indispensable tool in the world of ML deployment. It is a platform that lets you package your application and its dependencies into a “container,” which can then run on any system that supports Docker. Because the container carries its own dependencies, the application behaves consistently wherever it’s deployed. This uniformity dramatically simplifies deployment and helps eliminate the “it works on my machine” problem.

The same benefits apply to machine learning models. Containerizing your model together with its dependencies greatly reduces problems caused by mismatched library versions between environments. This streamlines scaling and distributing your models across different systems, promoting consistency and reproducibility.

Moreover, Docker containers are lightweight, requiring fewer resources compared to full-fledged virtual machines. This makes them an efficient choice for deploying resource-intensive machine learning models.

Kubernetes for Orchestration

Kubernetes is an open-source platform that automates the process of managing, scaling, and deploying containerized applications. It facilitates both declarative configuration and automation, which are vital for scalable applications.

In the context of ML model deployment, Kubernetes can efficiently manage your models’ lifecycle and scalability. For example, it can handle rolling updates and rollbacks, meaning that you can update your model or take corrective actions with minimal intervention and little or no downtime. The Kubernetes architecture also allows services to be exposed internally within your cluster or externally, creating flexibility in how your models are accessed and used.

A powerful feature of Kubernetes is its ability to autoscale applications based on resource usage, such as CPU utilization and memory, or on custom metrics defined by the user. Kubernetes adds replicas when load rises and removes them when load falls, which helps keep resource use in line with demand.
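To make the scaling decision concrete, the Kubernetes documentation describes the Horizontal Pod Autoscaler's core calculation as the current replica count multiplied by the ratio of the observed metric to the target metric, rounded up. The Python sketch below reproduces only that arithmetic plus the clamp to the configured replica bounds. It leaves out details such as the tolerance band and stabilization windows, and the function and parameter names are hypothetical.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int,
    max_replicas: int,
) -> int:
    """Apply ceil(current_replicas * current_metric / target_metric), then clamp."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Keep the result within the configured minimum and maximum.
    return max(min_replicas, min(max_replicas, raw))


# 4 replicas averaging 90% CPU against a 60% target: scale out.
print(desired_replicas(4, 90, 60, min_replicas=1, max_replicas=10))
# 4 replicas averaging 30% CPU against a 60% target: scale in.
print(desired_replicas(4, 30, 60, min_replicas=2, max_replicas=10))
```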

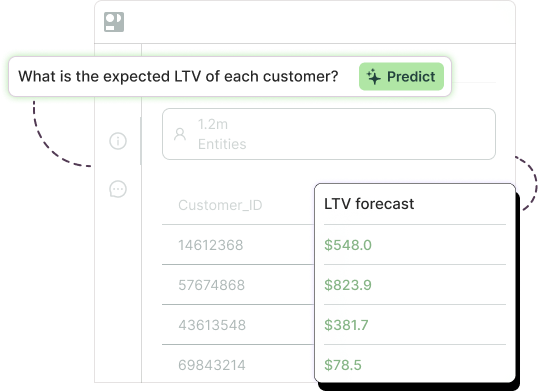

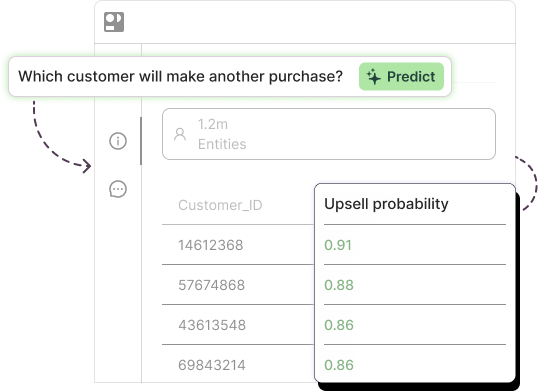

Automated Machine Learning Platforms’ Pre-built Connectors

Automated machine learning platforms simplify deployment with pre-built connectors. These connectors seamlessly integrate your models with databases, applications, and APIs, streamlining the process and maximizing business impact. Essentially, these platforms go beyond model deployment and act as a complete suite for the entire machine-learning pipeline.

Extensive toolkits within these platforms address a wide range of deployment needs. They handle everything from data preprocessing to feature engineering, feature selection, and hyperparameter tuning. Additionally, cross-validation functionality is often included, which helps verify the model’s robustness and accuracy across different data subsets.

Furthermore, automated machine-learning platforms may offer interpretable machine-learning functionalities. This allows for understanding model decisions, a critical aspect for industries requiring explainability, such as healthcare and finance.

Ensuring Seamless Integration in Production Environments

A machine learning model’s success hinges on its ability to work within your existing infrastructure. The most powerful platforms become useless if they can’t integrate smoothly. Here are some considerations during the building process to achieve seamless integration:

Integration with Existing IT Infrastructure

As discussed in the intro, your ML models must integrate seamlessly with your existing IT infrastructure. Compatibility checks, interface development, and thorough testing are fundamental steps to achieving this smooth integration. If the model can’t work within your existing systems, it will struggle to deliver value.

Integrating machine learning models with existing IT infrastructure requires careful evaluation. Key factors to assess include:

- Performance vs. Capacity: Your model’s performance needs must be weighed against your current infrastructure’s capabilities. Upgrades to hardware like processing power, storage, memory, or network might be needed to handle the model’s demands effectively.

- Compatibility Concerns: Compatibility issues can arise if your model needs to interact with specific software, device drivers, or system components. Verifying compatibility with existing versions used in your infrastructure is crucial to avoid integration problems.

- Scalability for Growth: As your model usage grows, your IT infrastructure should be able to scale accordingly. Consider implementing cloud solutions or other scalable IT systems to accommodate this potential increase.

By proactively addressing these potential challenges, you can ensure a smoother integration process for your machine-learning models.

Security and Compliance Considerations

Security and data privacy are essential in all IT work, including machine learning. Ensure that your ML models comply with all relevant requirements, both internal company policies and external regulations. These might include GDPR, HIPAA, or other data protection laws. Additionally, your models should have robust security protocols to prevent unauthorized access or data breaches.

To maintain security, your models should be designed to only access the data they need, with stricter access controls built around sensitive data. Encryption should be applied both for data at rest and data in transit to further protect against potential breaches.
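The "only access the data they need" principle can be sketched as an allowlist applied before a record reaches the model. This is an illustration only: the field names and the function name are hypothetical, and real access control would also be enforced at the database and infrastructure level.

```python
# Hypothetical set of fields the model is permitted to read.
ALLOWED_FEATURES = {"tenure_months", "plan_type", "monthly_spend"}


def select_model_inputs(record: dict, allowed: set) -> dict:
    """Return only the allowlisted fields, failing loudly if any are absent."""
    missing = allowed - set(record)
    if missing:
        raise KeyError(f"missing required fields: {sorted(missing)}")
    # Anything not on the allowlist (for example, an email address) is dropped.
    return {name: record[name] for name in allowed}


customer = {
    "tenure_months": 12,
    "plan_type": "pro",
    "monthly_spend": 40.0,
    "email": "person@example.com",  # sensitive field the model does not need
}
print(select_model_inputs(customer, ALLOWED_FEATURES))
```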

Always consider third-party risk. If your model deployment involves external vendors or cloud services, these entities should comply with the same security standards and regulatory requirements your organization adheres to. (Read more about Pecan’s emphasis on security.)

In addition, automating compliance checks throughout the model development and deployment process can help identify and fix potential issues before they become larger problems. This could involve running automated scripts or using machine learning to predict possible compliance issues based on past data.

Equipping your data team with continuous security and compliance training empowers them to make informed decisions during the model deployment process. Regular training keeps them up-to-speed on the latest data protection laws and technical safeguards, minimizing risks and ensuring responsible deployment practices.

Maintaining Model Performance in Production

The initial creation, testing, and deployment of your machine-learning model are just the beginning. Maintaining that level of performance requires ongoing attention. Here are some important considerations to keep your models running smoothly in the long term.

Model Versioning and Rollback Strategies

Newer doesn’t always mean better when it comes to models. An updated model might underperform its predecessor. Maintaining multiple model versions alongside a rollback strategy lets you revert quickly to a better-performing version when needed.

Implementing effective model versioning involves using version control systems that can record changes to your models over time. This means that with every update or improvement to a model, a new version is created while the older versions are also preserved. This version history can be extremely valuable, especially when updates do not deliver the expected improvements and you need a recovery mechanism.

Another part of the rollback strategy is being able to identify when an older version of your model becomes more effective than the newer version. Set up a robust monitoring system that can track the model’s performance on an ongoing basis and quickly flag if a newly updated model is underperforming.
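The versioning and rollback ideas above can be sketched as a small in-memory registry that keeps every registered version and remembers the order in which versions went live. The class and method names are hypothetical, and a production setup would store artifacts durably rather than in a dictionary.

```python
class ModelRegistry:
    """Keep every registered model version and track which one is live."""

    def __init__(self):
        self._versions = {}  # version label -> model artifact (any object)
        self._history = []   # promotion order; the last entry is live

    def register(self, version: str, artifact) -> None:
        """Store a version without changing what is live."""
        self._versions[version] = artifact

    def promote(self, version: str) -> None:
        """Make a registered version the live one."""
        if version not in self._versions:
            raise KeyError(f"unknown version: {version}")
        self._history.append(version)

    def rollback(self) -> str:
        """Return to the previously live version and report its label."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self._history[-1]

    @property
    def live_version(self):
        return self._history[-1] if self._history else None


registry = ModelRegistry()
registry.register("v1", "model-artifact-1")  # placeholder artifacts
registry.register("v2", "model-artifact-2")
registry.promote("v1")
registry.promote("v2")
# Suppose monitoring flags v2 as underperforming: revert to v1.
registry.rollback()
print(registry.live_version)
```

Older versions are never deleted on promotion, which is what makes the rollback possible.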

The Importance of Model Monitoring and Watching for Drift

Model drift refers to the situation where the model’s performance degrades over time due to changes in the underlying data. The concept is closely tied to the dynamic nature of real-world data. As our world is constantly evolving, so is the data the models are based on. Factors like customer behaviors, market tendencies, or even climate patterns might shift over time, and a model trained on older data might lose relevancy and its predictive power.

For continued accuracy after deployment, ongoing monitoring and updates are essential. This means regularly checking for data drift, which occurs when the statistical patterns in incoming data shift away from those in the training data. Retraining the model with fresh data helps restore its performance and keep its predictions reliable.
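One common way to quantify data drift for a single numeric feature is the Population Stability Index (PSI), which compares how values fall into bins in a reference sample and in recent data. PSI is offered here as one option among several, not as the only method. The sketch below uses only the standard library, and the function names, bin edges, and sample values are hypothetical.

```python
import bisect
import math


def bin_proportions(values, bin_edges, floor=1e-6):
    """Share of values in each bin; bin_edges must be sorted ascending."""
    if not values:
        raise ValueError("values must not be empty")
    counts = [0] * (len(bin_edges) + 1)
    for value in values:
        counts[bisect.bisect_right(bin_edges, value)] += 1
    # A small floor keeps the logarithm defined when a bin is empty.
    return [max(count / len(values), floor) for count in counts]


def population_stability_index(reference, current, bin_edges):
    """Sum of (current - reference) * ln(current / reference) over all bins."""
    ref = bin_proportions(reference, bin_edges)
    cur = bin_proportions(current, bin_edges)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


training_values = [1, 2, 3, 4, 5, 6, 7, 8]           # hypothetical feature values
recent_values = [value + 4 for value in training_values]  # shifted upward
edges = [3, 6]                                        # hypothetical bin edges

print(population_stability_index(training_values, training_values, edges))
print(population_stability_index(training_values, recent_values, edges))
```

Identical distributions give a PSI of zero, and larger values indicate a larger shift. Any alert threshold should be validated against your own data before it triggers retraining.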

Feedback Loops and Model Retraining

Establishing effective feedback loops is a big part of continuous improvement. The performance data of your models should be used to retrain and refine them, leading to better performance over time.

Implementing a reliable feedback system involves setting up mechanisms that allow direct interaction with users. User feedback is necessary for identifying issues or areas of improvement that might be invisible through performance metrics alone. Engagement metrics such as user satisfaction scores, time spent using the model, and other behavioral feedback can provide valuable insight into how to enhance the model’s effectiveness.

To collect this data, consider implementing A/B testing scenarios to directly compare the effectiveness of different model versions. This way, you can actively incorporate user feedback into your model retraining processes, allowing your models to evolve continuously and better meet user needs.
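A basic building block of an A/B test between model versions is a stable assignment of each user to one variant, so the same user always sees the same model. The sketch below does this by hashing the user ID; the function name, experiment label, variant labels, and traffic share are hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, candidate_share: float = 0.5) -> str:
    """Deterministically map a user to the 'candidate' or 'current' model."""
    # Including the experiment name gives each experiment its own split.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # The first 8 hex digits form a 32-bit integer; scale it into [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "candidate" if bucket < candidate_share else "current"


assignments = [assign_variant(f"user-{i}", "churn-model-test") for i in range(1000)]
print("candidate:", assignments.count("candidate"))
print("current:", assignments.count("current"))
```

Because the assignment depends only on the IDs, no lookup table is needed, and performance and feedback metrics can later be grouped by variant to compare the two versions.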

Bridge the ML Gap Today

Implementing effective ML model deployment is about more than just getting your models into production. It involves numerous factors, from the initial planning, building, and testing to the challenges of integrating models into existing systems and the ongoing work of maintaining and improving model performance over time. With these practices, tools, and strategies in place, you can streamline the transition from development to production and deliver real business value.

Ready for an automated, simpler approach to machine learning model deployment? See how Pecan can make the entire process of implementing machine learning far easier for your team. Get a tour today.