TL;DR

Rule-based AI uses fixed if-then logic set by humans. Machine learning learns patterns from data and improves over time. In 2026, most successful organizations aren’t picking one or the other. They’re combining both: ML handles prediction and pattern recognition, while rules enforce guardrails, compliance, and business logic. The result? Faster decisions, fewer blind spots, and predictions you can actually trust.

What is rule-based AI?

Rule-based AI is a type of artificial intelligence that makes decisions using predefined if-then logic programmed by human experts. These systems follow explicit rules to process inputs and produce outputs, making them transparent and easy to audit, but limited in their ability to adapt to new patterns or complex, shifting data.

Think of it like a recipe. If ingredient A is present and condition B is met, do C. A bank might flag any overseas transaction over $5,000 as potentially fraudulent. A marketing team might define “high-value lead” as anyone who visited the pricing page twice and works at a company with 500+ employees. Clear rules, clear outcomes.
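To make the recipe idea concrete, here is a minimal sketch of the two rules above in Python. The class and field names are hypothetical, and "visited the pricing page twice" is read as "at least twice", which is an assumption.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    amount_usd: float
    is_overseas: bool


@dataclass
class Lead:
    pricing_page_visits: int
    company_size: int


def flag_transaction(tx: Transaction) -> bool:
    """Rule: flag any overseas transaction over $5,000."""
    return tx.is_overseas and tx.amount_usd > 5_000


def is_high_value_lead(lead: Lead) -> bool:
    """Rule: two or more pricing page visits AND a company of 500+ employees."""
    return lead.pricing_page_visits >= 2 and lead.company_size >= 500


print(flag_transaction(Transaction(amount_usd=7_200, is_overseas=True)))   # True
print(flag_transaction(Transaction(amount_usd=7_200, is_overseas=False)))  # False
print(is_high_value_lead(Lead(pricing_page_visits=2, company_size=450)))   # False
```

Every outcome traces back to a line a human wrote, which is exactly what makes this style easy to audit and hard to adapt.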

And honestly, for a lot of scenarios, that works pretty well. Where things get tricky is when the real world stops following the recipe.

What is machine learning?

Machine learning is a branch of AI where systems learn patterns from data rather than following hardcoded instructions. ML algorithms analyze large datasets, identify relationships between variables, and can improve their accuracy as they’re retrained on more data. This makes them well-suited for complex, dynamic environments where conditions change frequently.

Instead of a human writing out every possible scenario, an ML model trains itself on historical data and discovers the signals that actually matter. Maybe it’s not just the pricing page visits that predict conversion. Maybe it’s a combination of email opens, time spent on the integrations page, and whether they came from an organic search. An ML model picks up on those subtleties without anyone having to spell them out in advance. And it keeps getting sharper.
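Here is a rough, dependency-free sketch of what "discovering the signals" can look like. It fits a tiny logistic regression with plain gradient descent on made-up data. The dataset, feature names, learning rate, and epoch count are all assumptions chosen for illustration, and a real project would normally reach for an established ML library instead.

```python
import math

# Hypothetical toy data: [visited_pricing_twice, read_integrations_page] -> converted
ROWS = [
    ([1.0, 0.0], 0), ([0.0, 0.0], 0), ([1.0, 1.0], 1), ([0.0, 1.0], 1),
    ([1.0, 0.0], 0), ([0.0, 1.0], 1), ([0.0, 0.0], 0), ([1.0, 1.0], 1),
]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, features) -> float:
    """Return the modeled probability of conversion for one lead."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(z)


def train(rows, learning_rate=0.5, epochs=500):
    """Fit logistic regression with batch gradient descent on log loss."""
    n_features = len(rows[0][0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for features, label in rows:
            error = predict(weights, bias, features) - label
            for i, value in enumerate(features):
                grad_w[i] += error * value
            grad_b += error
        # Step against the average gradient over all rows.
        weights = [w - learning_rate * g / len(rows) for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / len(rows)
    return weights, bias


weights, bias = train(ROWS)
print("learned weights [pricing, integrations]:", weights)
print("P(convert | integrations only):", predict(weights, bias, [0.0, 1.0]))
print("P(convert | pricing only):", predict(weights, bias, [1.0, 0.0]))
```

Nobody told the model that the integrations page matters more than the pricing page. In this toy data, conversions track the integrations feature, so training should push that weight well above the pricing weight on its own.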

If you’re new to how this works in a business context, Pecan’s guide on how to build a predictive analytics model is a solid starting point.

Rule-based AI vs. machine learning: quick comparison

| Feature | Rule-Based AI | Machine Learning |
| --- | --- | --- |
| How it works | Predefined if-then logic | Learns patterns from data |
| Adaptability | Static, requires manual updates | Dynamic, improves over time |
| Handles complexity | Struggles with nuanced scenarios | Thrives on complex, multi-variable data |
| Setup time | Fast for simple use cases | Longer initial training, but scales better |
| Transparency | High (rules are explicit) | Varies, though explainability tools are improving |
| Data requirement | Minimal | Needs quality historical data |
| Maintenance | Manual rule updates as conditions change | Automated retraining and monitoring |
| Best for | Compliance checks, simple routing, known logic | Predictions, personalization, anomaly detection |
| Scalability | Breaks down as rules multiply | Handles millions of data points and variables |
| Bias risk | Baked-in human assumptions | Can surface hidden patterns but needs monitoring |

Pros and cons: a closer look

Rule-based AI

What it does well:

Simple rule-based systems are cheap to build and quick to deploy. You don’t need massive datasets or specialized infrastructure. The logic is transparent, which matters a lot in regulated industries where you need to explain exactly why a decision was made. If an auditor asks why a transaction was flagged, you can point to the rule.

They also give you direct control. Every decision maps to a rule that a human wrote. There’s no guessing about what the system “thinks.”

Where it falls short:

Rules are rigid. Customer behavior shifts, markets evolve, new patterns emerge, and your rule set just… sits there. Unless someone manually updates it, anyway. And once you’ve got hundreds (or thousands) of rules, maintaining them becomes its own full-time job. There’s also a real ceiling on what rules can capture. Human experts can only encode so many variables into if-then statements. In practice, that means rule-based systems tend to miss the subtle signals that actually differentiate high-value customers from everyone else.

One marketer on Reddit described their experience with rule-based lead scoring this way: they set it up, it worked for a quarter, and then the market shifted and the rules stopped reflecting reality. They were scoring leads based on criteria that no longer mattered.

Machine learning

What it does well:

ML excels at finding patterns humans would never think to look for. It can process thousands of variables simultaneously and identify non-obvious correlations across massive datasets. It adapts. It improves. And for predictive use cases like churn modeling, lead scoring, and demand forecasting, the accuracy gap between ML and rules isn’t small. We’re talking 20-40% better forecast accuracy in retail settings, and meaningful improvements in conversion rates for B2B teams using predictive lead scoring.

Where it falls short:

ML needs data, and it needs decent data. If your historical records are patchy or your CRM is a mess (and let’s be real, whose isn’t?), you’ll need to invest in data preparation before models can deliver reliable results.

There’s also the “black box” concern, where it can be hard to explain exactly why a model scored one lead higher than another. That said, explainability has improved dramatically. Modern platforms now provide confidence scores and feature importance breakdowns alongside every prediction. It’s not the opaque mystery it used to be.

The 2026 reality: it’s not either/or anymore

Here’s what’s actually happening in practice right now. The rule-based vs. ML debate has largely resolved itself, and the answer is “both.”

The Machine Learning Week conference literally rebranded to “HYBRID AI 2026” for its 18th annual event. That should tell you something about where the industry consensus has landed.

How hybrid AI works in practice

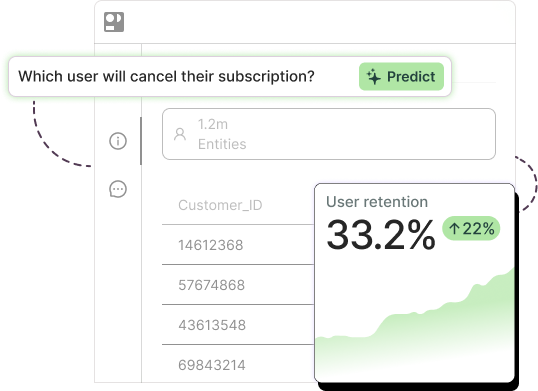

The dominant pattern looks like this: machine learning handles the prediction and pattern recognition layer, while rule-based logic enforces the guardrails. ML says “this customer has a 78% likelihood of churning.” Rules say “if churn probability exceeds 70% AND the customer’s lifetime value is in the top 20%, trigger a personalized retention offer through Salesforce.”
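The churn example above can be sketched in a few lines. This is an illustration only: the `Customer` fields and the action name are hypothetical, the churn probability is assumed to come from some upstream model, and "top 20%" is read as "at or above the 80th percentile of lifetime value".

```python
from dataclasses import dataclass
from typing import Optional

CHURN_THRESHOLD = 0.70      # "churn probability exceeds 70%"
TOP_LTV_PERCENTILE = 0.80   # assumption: top 20% == 80th percentile or higher


@dataclass
class Customer:
    customer_id: str
    churn_probability: float  # produced by the ML layer (hypothetical model)
    ltv_percentile: float     # 0.0 to 1.0 rank of lifetime value


def next_action(customer: Customer) -> Optional[str]:
    """Rule layer: turn an ML prediction into a governed action, or do nothing."""
    is_at_risk = customer.churn_probability > CHURN_THRESHOLD
    is_high_value = customer.ltv_percentile >= TOP_LTV_PERCENTILE
    if is_at_risk and is_high_value:
        return "personalized_retention_offer"
    return None


print(next_action(Customer("c-001", churn_probability=0.78, ltv_percentile=0.91)))
print(next_action(Customer("c-002", churn_probability=0.78, ltv_percentile=0.40)))
```

The split is the point: the model can be retrained without touching the business rule, and the rule can be tightened without retraining the model.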

Neither piece works as well alone. ML without rules can produce predictions that nobody acts on, or worse, that trigger inappropriate actions. Rules without ML are operating on assumptions that may have been accurate six months ago but have since drifted.

Agentic AI: the next layer

And then there’s the newest piece of the puzzle. Agentic AI systems, which can autonomously plan and execute multi-step workflows, are built on this same hybrid foundation. The ML layer powers reasoning and planning. The rule-based layer defines action limits, escalation paths, and human checkpoints.
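As a rough sketch of that rule-based layer, the check below reviews an action an agent proposes before anything executes. The allow-list, the spend limit, and every name here are assumptions made up for illustration, not a description of any particular agent framework.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    NEEDS_HUMAN = "needs_human_approval"
    BLOCK = "block"


@dataclass
class ProposedAction:
    name: str
    cost_usd: float


# Hypothetical guardrail settings.
ALLOWED_ACTIONS = {"send_email", "issue_discount", "open_ticket"}
AUTO_APPROVE_LIMIT_USD = 100.0


def review(action: ProposedAction) -> Verdict:
    """Apply action limits first, then the human checkpoint."""
    if action.name not in ALLOWED_ACTIONS:
        return Verdict.BLOCK            # action limit: outside the allow-list
    if action.cost_usd > AUTO_APPROVE_LIMIT_USD:
        return Verdict.NEEDS_HUMAN      # escalation path: a person signs off
    return Verdict.ALLOW


print(review(ProposedAction("send_email", 0.0)))
print(review(ProposedAction("issue_discount", 250.0)))
print(review(ProposedAction("delete_account", 0.0)))
```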

The agentic AI market was valued at $7.8 billion in 2025 and is projected to exceed $52 billion by 2030. Gartner expects 40% of enterprise applications to embed AI agents by the end of 2026. That’s up from less than 5% just a year earlier.

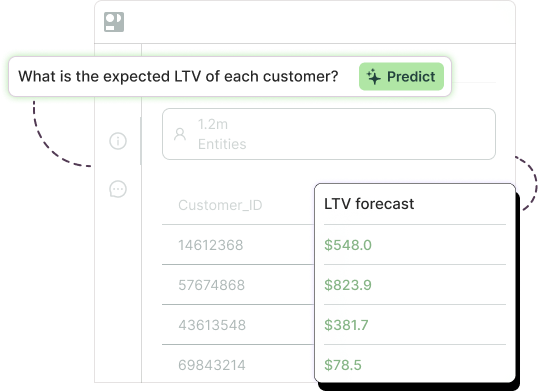

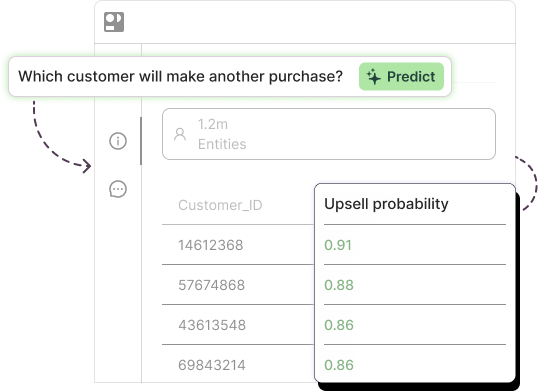

Pecan’s own Predictive AI Agent is a good example of this in action. It translates a plain-English business question into a full predictive workflow, building and validating models automatically, while built-in guardrails prevent common pitfalls like data leakage and overfitting. The hybrid engine does the heavy lifting so business teams can focus on what the predictions actually mean for their next move.

Neuro-symbolic AI

Worth mentioning briefly: there’s growing momentum around neuro-symbolic AI, which formally integrates neural networks (the learning part) with symbolic reasoning (the logic part) into unified architectures. Gartner added it to their 2025 Hype Cycle, and the World Economic Forum highlighted it as a pathway to AI systems that can reason logically without hallucinating. It’s still early, but it signals that the blending of rules and learning is becoming a fundamental architectural principle, not just a deployment strategy.

Industry-specific recommendations

Different industries sit at different points on the rules-to-ML spectrum. Here’s where things stand in 2026.

Retail and ecommerce

The shift away from pure rules has been dramatic here. AI in retail is projected to grow from $14.24 billion in 2025 to over $96 billion by 2030. And most of that growth is driven by predictive capabilities, not static rule engines.

For demand forecasting, ML consistently delivers 20-40% better accuracy than rule-based methods. That translates directly into fewer stockouts, less overstock, and healthier margins. Across Pecan’s customer deployments, planning teams have seen roughly a 15% reduction in overstock and around an 8% reduction in inventory costs. If you’re still forecasting with spreadsheets and reorder-point rules, take a look at how AI demand forecasting scales with automation.

What to use when: Rules for supplier lead times, minimum order quantities, and warehouse constraints. ML for demand prediction, customer segmentation, and personalized recommendations.

SaaS and B2B

Lead scoring is the clearest use case here. Traditional rule-based scoring (assigning points for job title, company size, page visits) gets you maybe 60% of the way. Predictive ML scoring looks at the full picture, hundreds of behavioral and firmographic signals, and finds the patterns that actually correlate with closed deals.
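For reference, a traditional points-based scorecard tends to look something like the sketch below. The point values, the title tiers, and the cap on page visits are invented for illustration.

```python
# Hypothetical point table; real scorecards are tuned by hand per team.
TITLE_POINTS = {"vp": 30, "director": 20, "manager": 10}
LARGE_COMPANY_POINTS = 25
POINTS_PER_PAGE_VISIT = 2
MAX_COUNTED_VISITS = 10


def score_lead(job_title: str, company_size: int, page_visits: int) -> int:
    """Add up fixed points for job title, company size, and page visits."""
    points = TITLE_POINTS.get(job_title.lower(), 0)
    if company_size >= 500:
        points += LARGE_COMPANY_POINTS
    points += min(page_visits, MAX_COUNTED_VISITS) * POINTS_PER_PAGE_VISIT
    return points


print(score_lead("VP", company_size=600, page_visits=3))  # 61
```

Every weight in that table is someone's guess, and none of them update when buyer behavior changes. That is the gap predictive scoring is meant to close.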

Teams using predictive lead scoring report roughly a 30% improvement in campaign ROI.

For churn prediction, there’s an interesting staging effect. Early-stage SaaS companies (under 1,000 customers) can get 80% of the value from simple rule-based scorecards. Once you’re past that threshold and have 12+ months of clean data, ML becomes significantly more accurate. The smartest approach is to start with rules and layer in ML as your data matures.

What to use when: Rules for lead routing, SLA enforcement, and basic qualification filters. ML for predictive scoring, churn risk assessment, and customer lifetime value modeling.

Financial services

Financial services holds the largest share of enterprise AI spending at roughly 24% of the market. And fraud detection is the textbook example of hybrid AI done right.

Pure rule-based fraud detection (flag transactions over $10,000 or from blacklisted IPs) catches the obvious stuff but misses sophisticated patterns. Modern ML ensemble models achieve F1 scores above 94%, and newer approaches using clustering-augmented techniques push into the 99%+ range. But the rules aren’t going away. Regulatory requirements demand explainability, and rules provide the auditable paper trail. So in practice, rules handle the first-pass screening while ML catches what rules miss.
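A minimal sketch of that two-stage flow: rules screen first and record an auditable reason, then a model scores whatever the rules let through. The model here is a stub, and the IP address, score threshold, and reason strings are all assumptions for illustration.

```python
from typing import Callable, NamedTuple


class Decision(NamedTuple):
    flagged: bool
    reason: str  # kept for the audit trail


BLACKLISTED_IPS = {"203.0.113.7"}  # placeholder from a documentation IP range
AMOUNT_LIMIT_USD = 10_000
MODEL_THRESHOLD = 0.9              # hypothetical cut-off for the ML score


def toy_model_score(amount_usd: float, ip: str) -> float:
    """Stand-in for a trained model: treats amounts just under the limit as suspicious."""
    return 0.95 if 9_000 <= amount_usd <= AMOUNT_LIMIT_USD else 0.1


def screen(amount_usd: float, ip: str,
           model_score: Callable[[float, str], float] = toy_model_score) -> Decision:
    # Stage 1: explicit rules, each with a reason an auditor can read.
    if amount_usd > AMOUNT_LIMIT_USD:
        return Decision(True, "rule:amount_over_10000")
    if ip in BLACKLISTED_IPS:
        return Decision(True, "rule:blacklisted_ip")
    # Stage 2: the model looks at what the rules did not catch.
    score = model_score(amount_usd, ip)
    if score >= MODEL_THRESHOLD:
        return Decision(True, f"model:score={score:.2f}")
    return Decision(False, "clear")


print(screen(12_000, "198.51.100.1"))
print(screen(9_500, "198.51.100.1"))
print(screen(50, "198.51.100.1"))
```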

Credit scoring is following the same path. Hybrid approaches achieve comparable performance to full-feature models while using roughly 88% fewer input variables, which matters when regulators want to understand every factor.

What to use when: Rules for regulatory compliance, KYC checks, and audit trails. ML for fraud detection, credit risk scoring, and customer behavior analysis.

Healthcare

Healthcare AI has exploded. The FDA had authorized over 1,250 AI-enabled medical devices as of mid-2025, up from around 950 just a year prior. Clinical decision support has evolved from mostly rule-based logic (pre-2010) through ML-enabled diagnostics to the current era of foundation model integration.

But healthcare also faces intense regulatory scrutiny, with 250+ AI bills across 34+ states in the US alone. That makes the hybrid approach especially important: ML for the analytical heavy lifting, rules for safety guardrails and regulatory compliance.

What to use when: Rules for clinical protocol enforcement, dosage limits, and compliance workflows. ML for diagnostic support, patient risk stratification, and resource optimization.

So which should you choose?

If you’ve read this far, you probably already know the answer isn’t “one or the other.” But let me try to make the decision framework practical.

Start with rules if your use case has well-defined, stable logic that doesn’t change often. Compliance checks, data validation, simple routing, notification triggers. If the decision tree has fewer than, say, 20 branches and the conditions don’t shift quarter to quarter, rules will serve you fine.

Move to ML when you need to predict outcomes, personalize at scale, or make sense of complex, multi-variable relationships that change over time. Churn prediction, demand forecasting, lead scoring, customer lifetime value, anomaly detection. These are ML’s sweet spot.

Combine both when you need predictions AND accountability. Which, in most business contexts, is pretty much always. Let ML surface the insights. Let rules turn those insights into governed, repeatable actions.
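If it helps to see the framework as logic, here is one way to write it down. The parameter names are hypothetical, the 20-branch cut-off is the rough figure from above, and the final fallback is an assumption, since the framework doesn’t cover that case.

```python
def recommend_approach(needs_prediction: bool, needs_accountability: bool,
                       logic_is_stable: bool, decision_branches: int) -> str:
    """Map the three guidelines above onto a single recommendation."""
    if needs_prediction and needs_accountability:
        return "hybrid"
    if needs_prediction:
        return "ml"
    if logic_is_stable and decision_branches < 20:
        return "rules"
    # Unstable or sprawling logic with no prediction need is not covered by
    # the guidelines; flagging it for a closer look is an assumption.
    return "revisit"


print(recommend_approach(True, True, False, 50))   # hybrid
print(recommend_approach(False, True, True, 8))    # rules
```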

The most common mistake teams make? Staying on rules too long because “they’re working fine.” Rules don’t show you what you’re missing. You can’t measure the leads you didn’t score correctly or the churn you didn’t catch. It’s the business equivalent of driving with the headlights off and claiming you’re fine because you haven’t hit anything yet.

For a deeper look at the gap between backward-looking analytics and forward-looking prediction, check out BI vs. Predictive Analytics: Battle of the Brains.

Where Pecan fits in

Pecan’s Predictive AI Agent was built for exactly this hybrid world. You ask a business question in plain English. The agent handles data preparation, feature engineering, model building, and validation automatically. Predictions flow directly into the tools your team already uses, whether that’s Salesforce, HubSpot, or your data warehouse.

No coding. No waiting weeks for a data science team. No black boxes.

Across customer deployments, Pecan has delivered roughly 12% average churn reduction, around 10% improvement in customer lifetime value, and predictive models reaching production up to 32x faster than traditional approaches.

If you’ve been relying on rules and wondering whether there’s something better, or if you’ve been burned by ML tools that were too complex to actually use, this is a good place to start exploring.

Frequently asked questions

What’s the main difference between rule-based AI and machine learning?

Rule-based AI follows predefined if-then logic written by human experts. Machine learning learns patterns directly from data, adapting and improving its accuracy over time without being explicitly programmed for each scenario. The core difference: rules are static, ML is dynamic.

Can rule-based AI and machine learning be used together?

Yes, and in 2026, that’s the standard approach. Most enterprise teams combine ML for prediction and pattern recognition with rule-based logic for compliance, guardrails, and business workflow automation. This hybrid model gives you both adaptability and control.

When is rule-based AI better than machine learning?

Rule-based AI works well for straightforward, stable decision logic where transparency and auditability are critical, like regulatory compliance checks, basic data validation, or simple if-then routing. If the conditions rarely change and the decision tree is small, rules can be faster and cheaper to implement.

What are the limitations of rule-based AI?

Rule-based systems can’t adapt to changing conditions without manual updates. They struggle with ambiguity, can’t uncover hidden patterns in data, and become extremely difficult to maintain as the number of rules grows. They also embed human assumptions that may carry bias.

What is hybrid AI?

Hybrid AI combines multiple AI approaches, typically pairing machine learning (for learning and prediction) with rule-based systems (for logic and constraints). It can also include neuro-symbolic architectures that formally integrate neural networks with symbolic reasoning. The goal is to get the adaptability of ML together with the transparency and control of rules.

How does Pecan handle the rule-based vs. ML tradeoff?

Pecan’s Predictive AI Agent automates the full predictive workflow, from data preparation through model validation, using machine learning. Built-in guardrails (effectively, rules) prevent common issues like data leakage and overfitting. This means business teams get accurate, validated predictions without needing to manage the underlying complexity. Predictions are delivered directly into existing business systems where teams can act on them.