In a nutshell:

- Data flattening is crucial for preparing data for predictive analytics modeling. It transforms nested data into a flat, tabular format.

- Challenges of nested data structures include complexity, data manipulation skills required, and potential impact on model accuracy.

- Techniques for flattening data include using tools like the pandas library and SQL queries.

- Best practices for data flattening involve data cleaning, handling missing values, and leveraging automated platforms like Pecan AI.

- Data flattening improves model performance, efficiency in feature engineering, and allows for more accurate analysis in predictive analytics projects.

Flattening your data might sound like transforming a rich, multidimensional, intriguing collection of information into a boring, smushed, and homogenous single layer. But while it sounds dull and sad, data flattening is actually a crucial step in preparing data for predictive analytics modeling.

When you flatten your data, you transform complex, nested data structures into a flat, tabular format suitable for machine learning algorithms. This article covers the challenges posed by nested data structures, techniques for flattening data with tools like the pandas library and SQL queries, best practices for data flattening, and the impact of data flattening on predictive analytics success.

Understanding and mastering data flattening is essential for data analysts and data scientists who are looking to improve model performance and accuracy in predictive analytics projects.

Understanding Nested Data Structures

Nested data structures, also known as hierarchical data structures, are data sets in which some data fields are themselves data sets. In simpler terms, a single field can contain other fields, or a whole list of records, within it.

Imagine you have a database of customers, and each customer record includes a list of orders. Each order might contain multiple products. This complex structure is what we refer to as nested data.
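To make the customer example concrete, here is a minimal sketch of what such nested records might look like in Python. The field names (`orders`, `products`, `sku`, and so on) and the values are invented for illustration and are not taken from any real schema.

```python
# Hypothetical nested records: customers -> orders -> products.
customers = [
    {
        "id": 1,
        "name": "Ada",
        "orders": [
            {"order_id": 101, "products": [
                {"sku": "A-1", "quantity": 2},
                {"sku": "B-7", "quantity": 1},
            ]},
            {"order_id": 102, "products": [
                {"sku": "C-3", "quantity": 4},
            ]},
        ],
    },
    {"id": 2, "name": "Grace", "orders": []},
]


def nesting_depth(value):
    """Count how many levels of dict/list containers are stacked inside value."""
    if isinstance(value, dict):
        return 1 + max((nesting_depth(v) for v in value.values()), default=0)
    if isinstance(value, list):
        return 1 + max((nesting_depth(v) for v in value), default=0)
    return 0  # scalars (numbers, strings) add no nesting


depth = nesting_depth(customers)

# A flat table with one row per product would need this many rows.
product_rows = sum(
    len(order["products"])
    for customer in customers
    for order in customer["orders"]
)
```

Here a single "customer" value hides several layers of containers, which is exactly what a tabular model input cannot represent directly.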

Potential Challenges Posed by Nested Data in Predictive Analytics

Nested data structures pose several challenges in predictive analytics. Most machine learning algorithms and statistical models are designed to work with flat, tabular data structures instead of nested ones. Therefore, using nested data requires extensive preprocessing and transformation to convert it into a usable format.

Nested data structures are also often more complex and harder to understand, making it more difficult to extract meaningful insights for predictive modeling. They require a higher level of data manipulation skills to handle, which can slow down the overall analytics process and increase the chance of errors.

Finally, nested data can contain multiple levels of hierarchy, leading to intricacies in the relationships between variables and potential difficulties in determining causality. This complexity can influence the accuracy and interpretability of predictive analytic models, ultimately affecting their performance.

Techniques for Flattening Data

Once you understand the complexity and challenges posed by nested data structures, you can begin to explore the techniques used to flatten data for predictive analytics. Data scientists mainly use two tools for data flattening: the pandas library and SQL queries. Both are described below.

Using the Pandas Library for Data Flattening

Pandas is an open-source data manipulation library created for the Python programming language. It includes various built-in functions for data transformation, including a method to flatten nested data structures.
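The article does not name a specific function, but one pandas function that fits this description is `pandas.json_normalize`. The sketch below assumes the nested data arrives as a list of Python dictionaries; the records and column names are made up for illustration.

```python
import pandas as pd

# Hypothetical nested input: each customer carries a list of orders.
customers = [
    {"id": 1, "name": "Ada", "orders": [
        {"order_id": 101, "product": "widget", "quantity": 2, "price": 9.5},
        {"order_id": 102, "product": "gadget", "quantity": 1, "price": 20.0},
    ]},
    {"id": 2, "name": "Grace", "orders": [
        {"order_id": 103, "product": "widget", "quantity": 4, "price": 9.5},
    ]},
]

# record_path selects the nested list that becomes the rows;
# meta lists the parent fields to repeat on every row.
flat = pd.json_normalize(
    customers,
    record_path="orders",
    meta=["id", "name"],
    meta_prefix="customer_",
)
```

The result has one row per order, with `customer_id` and `customer_name` repeated alongside the order fields. Customers whose `orders` list is empty produce no rows with this call, so they need separate handling if they matter to the model.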


Using SQL Queries for Data Flattening

SQL queries are another approach used to flatten data, especially when working with relational databases.

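Standard SQL operates on tables, so it assumes the data is already in a tabular format. When a nested relationship is stored as separate related tables, you can flatten it with a series of JOIN operations. Some SQL dialects also provide extensions for expanding array or JSON columns into rows, such as UNNEST in PostgreSQL and BigQuery.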

Suppose we have a database with two tables, Customers and Orders, where each customer can have multiple orders. We can flatten this data using a JOIN operation:

    SELECT Customers.name, Customers.age, Orders.product, Orders.quantity, Orders.price
    FROM Customers
    JOIN Orders ON Customers.id = Orders.customer_id;

This SQL query will output a flat, tabular dataset in which each row represents a single order and includes information about the customer who made the order.
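To try the query without a database server, it can be run against an in-memory SQLite database from Python. This is only a sketch: the table definitions and sample rows are assumptions added for illustration, and the `ORDER BY` clause is added solely to make the output order predictable.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Hypothetical schema and rows matching the columns used in the query.
conn.executescript("""
    CREATE TABLE Customers (id INTEGER PRIMARY KEY, name TEXT, age INTEGER);
    CREATE TABLE Orders (
        id INTEGER PRIMARY KEY,
        customer_id INTEGER REFERENCES Customers(id),
        product TEXT,
        quantity INTEGER,
        price REAL
    );
    INSERT INTO Customers VALUES (1, 'Ada', 36), (2, 'Grace', 45), (3, 'Linus', 29);
    INSERT INTO Orders VALUES
        (1, 1, 'widget', 2, 9.5),
        (2, 1, 'gadget', 1, 20.0),
        (3, 2, 'widget', 4, 9.5);
""")

# One output row per order, with the customer's fields repeated on each.
rows = conn.execute("""
    SELECT Customers.name, Customers.age, Orders.product, Orders.quantity, Orders.price
    FROM Customers
    JOIN Orders ON Customers.id = Orders.customer_id
    ORDER BY Orders.id
""").fetchall()

conn.close()
```

Because a plain `JOIN` is an inner join, the customer with no orders ('Linus' in this sample) does not appear in the result. A `LEFT JOIN` would keep such customers, with NULLs in the order columns.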

By using these techniques, nested data can be transformed into a flat format that is easier to analyze and manipulate and is suitable for input into predictive analytics algorithms.

Best Practices for Data Flattening

Three best practices for data flattening are cleaning and preprocessing the data, handling missing or null values, and leveraging automated platforms like Pecan AI that streamline the data flattening process.

Data Cleaning and Preprocessing

The first step before flattening your data is to clean it and preprocess it. This involves ensuring that the data is accurate, checking for and resolving inconsistencies, dealing with missing or null values, and encoding categorical variables.

You may want to normalize or standardize numerical data to create a consistent scale, especially when working with machine learning algorithms that are sensitive to the scale of the input data. It may also be necessary to derive new features from the existing ones to enrich the dataset and enhance the performance of the model.
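As a rough illustration of these preprocessing steps, the sketch below derives a feature, standardizes the numeric columns, and one-hot encodes a categorical column with pandas. The column names and values are hypothetical, and z-score standardization is only one of several reasonable scaling choices.

```python
import pandas as pd

# Hypothetical order-level data.
orders = pd.DataFrame({
    "quantity": [2, 1, 4, 3],
    "price": [9.5, 20.0, 9.5, 5.0],
    "channel": ["web", "store", "web", "app"],
})

# Derive a new feature from existing ones.
orders["order_total"] = orders["quantity"] * orders["price"]

# Standardize numeric columns: subtract the mean, divide by the
# population standard deviation (ddof=0), giving mean 0 and std 1.
numeric_cols = ["quantity", "price", "order_total"]
numeric = orders[numeric_cols]
standardized = (numeric - numeric.mean()) / numeric.std(ddof=0)

# Encode the categorical column as one indicator column per category.
encoded = pd.get_dummies(orders["channel"], prefix="channel")

prepared = pd.concat([standardized, encoded], axis=1)
```

In a real project the mean and standard deviation should be computed on the training split only and then reused on new data, so that no information leaks from the evaluation set.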

Handling Missing or Null Values During Flattening

When flattening nested data, it's not uncommon to encounter missing or null values. These can occur for a range of reasons, like an error during data collection or when a particular attribute is not applicable to a certain observation.

Before proceeding with flattening, you should decide how to handle these missing or null values, as they can negatively affect the performance of your predictive analytics model. Several techniques are available, including imputation (replacing missing values with a calculated value), deletion (removing observations or variables with missing values), or even encoding missing values as a separate category or unique value.
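The three options can be sketched in pandas as follows. The data is invented, and the choice of the median for imputation and of `"unknown"` as the placeholder category are assumptions made for the example rather than recommendations.

```python
import pandas as pd

# Hypothetical flattened data with gaps in two columns.
flat = pd.DataFrame({
    "customer": ["Ada", "Ada", "Grace", "Linus"],
    "quantity": [2.0, None, 4.0, 1.0],
    "channel": ["web", None, "store", "web"],
})

# Imputation: replace missing numbers with a calculated value (here, the median).
imputed = flat.assign(quantity=flat["quantity"].fillna(flat["quantity"].median()))

# Deletion: drop every row that has at least one missing value.
dropped = flat.dropna()

# Separate category: mark missing categorical values explicitly.
flagged = flat.assign(channel=flat["channel"].fillna("unknown"))
```

Each call returns a new DataFrame and leaves `flat` unchanged, which makes it easy to compare the strategies side by side before committing to one.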

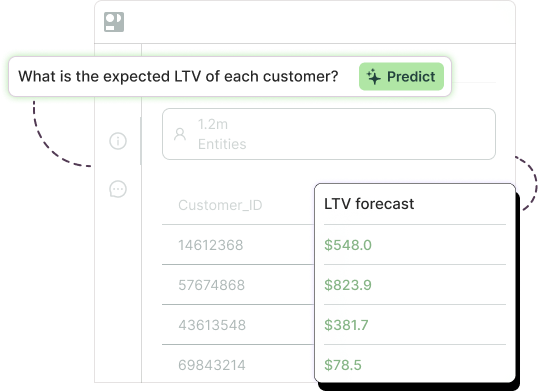

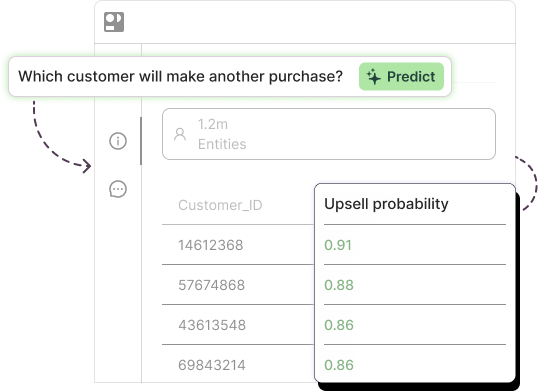

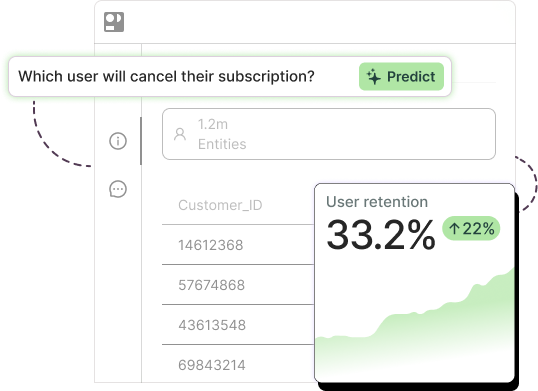

Using Automated Platforms like Pecan AI

Automated platforms like Pecan AI offer a comprehensive solution to data flattening, cleaning, and preparation. These platforms leverage advanced algorithms and automation to streamline the entire process, reducing the time and effort required from you. They ensure that your data is in the right format and quality for predictive analytics, allowing you to focus on the actual analysis and interpretation of the results.

Pecan AI, for instance, automatically handles the flattening of nested data and deals with missing values, outliers, and anomalies. It also performs necessary transformations on your data and builds predictive models without requiring any coding on your part. This makes it a valuable tool for data analysts and scientists who want to optimize their data preparation process and support the success of their predictive analytics projects.

Following best practices in data flattening is just as significant as understanding the techniques to apply. By ensuring proper data cleaning, handling null values effectively, and considering automation, you enhance your predictive model's performance and reliability.

How Data Flattening Impacts Predictive Analytics

Predictive analytics, which forecasts future outcomes from patterns in historical data, marks a transformative shift in data-driven decision-making. However, its success hinges on the quality and structure of the data at hand. The data needs to be in a format that predictive algorithms can readily learn from, which is why data flattening is so valuable.

Improved Model Performance and Accuracy

The primary contribution of data flattening to predictive analytics is the significant enhancement of model performance and accuracy. Most predictive algorithms require a flat, tabular format as input and struggle to process nested data structures effectively.

Put simply, flattening your data transforms it into a format that predictive algorithms can easily digest and learn patterns from. A well-structured dataset allows your predictive models to accurately capture the relationships and dependencies between various data points, resulting in improved predictive performance.

By flattening your data, you're creating a level playing field where each variable is presented to the model in the same way, as a column. This helps keep the quirks of a nested structure, such as fields buried several levels deep, from skewing your model's predictions.

Efficiency in Feature Engineering and Model Training

Data flattening also increases efficiency in feature engineering and model training. Feature engineering is the process of creating new features from existing data that a predictive model can use to improve its performance.

However, feature engineering can be a complex task when dealing with nested data structures due to their hierarchical nature and potential redundancy. Flattened data, by contrast, simplifies this task by presenting the data in a format that is easy to read and manipulate.
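For instance, once orders sit in a flat table, customer-level features can be computed with a single grouped aggregation. The sketch below uses pandas named aggregation; the table contents and feature names are hypothetical.

```python
import pandas as pd

# Hypothetical flat table: one row per order.
flat = pd.DataFrame({
    "customer_id": [1, 1, 2],
    "quantity": [2, 1, 4],
    "price": [9.5, 20.0, 9.5],
})
flat["order_total"] = flat["quantity"] * flat["price"]

# One row per customer, with features summarizing that customer's orders.
features = flat.groupby("customer_id").agg(
    order_count=("order_total", "size"),
    total_spend=("order_total", "sum"),
    avg_quantity=("quantity", "mean"),
).reset_index()
```

The same summary computed directly on nested records would require hand-written loops over each customer's order list.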

Additionally, training a machine learning model on a nested dataset can be time-consuming and computationally intensive due to the additional processing required to handle the complexity of the data structure. Flattened data, on the other hand, is simpler and more efficient to process, which can significantly speed up the training time for your predictive models.

Data flattening is a key step in preparing your data for predictive analytics. It enhances model performance, improves the efficiency of feature engineering and model training, and allows for a more accurate and comprehensive analysis of your data. By understanding and mastering this process, you can significantly elevate the success of your predictive analytics endeavors.

Challenges and Pitfalls in Data Flattening

While data flattening is an essential process for predictive analytics, it comes with its own challenges and pitfalls. There are several obstacles to consider, especially when dealing with large and complex nested data structures.

Dealing with Large and Complex Nested Data Structures

One of the primary challenges in data flattening is the complexity and size of the nested data structures. Deeply nested data means more transformations and processing to flatten the data, which can be time-consuming and computationally intensive. These nested structures could also contain redundant data, making the data flattening process more complex and increasing the risk of errors.
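The sketch below shows why deeper nesting means more work and more redundancy: each extra level adds another loop, and parent fields are copied onto every resulting row. The record layout and field names are assumptions made for the example.

```python
# Hypothetical three-level records: customers -> orders -> products.
customers = [
    {"id": 1, "name": "Ada", "orders": [
        {"order_id": 101, "products": [
            {"sku": "A-1", "quantity": 2},
            {"sku": "B-7", "quantity": 1},
        ]},
        {"order_id": 102, "products": [{"sku": "C-3", "quantity": 4}]},
    ]},
    {"id": 2, "name": "Grace", "orders": [
        {"order_id": 103, "products": [{"sku": "A-1", "quantity": 1}]},
    ]},
]


def flatten_customers(records):
    """Yield one flat row per product, repeating customer and order fields."""
    for customer in records:
        for order in customer.get("orders", []):
            for product in order.get("products", []):
                yield {
                    "customer_id": customer["id"],
                    "customer_name": customer["name"],
                    "order_id": order["order_id"],
                    **product,  # copy the product's own fields into the row
                }


rows = list(flatten_customers(customers))
names = [row["customer_name"] for row in rows]
```

Two customers become four rows, and "Ada" is stored three times. On large data sets this repetition is one source of the extra storage and processing cost.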

Handling large data sets also requires significant computational resources and storage space. This can be a limiting factor for many organizations, especially those with limited IT resources.

Performance Considerations in Data Flattening Processes

Another challenge with data flattening is the potential impact on performance. When dealing with large data sets, the process of flattening can be computationally intensive and slow, especially on systems with limited resources.

The efficiency of the algorithm used for flattening also affects how long the process takes. A poorly designed algorithm increases processing time, and a buggy one can produce incorrect or incomplete flattened data. Therefore, it's crucial to ensure that the flattening logic is both efficient and reliable.
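One common way to keep memory use bounded on large inputs is to flatten lazily and process the rows in fixed-size batches instead of building one huge table. The following is a sketch under that assumption; the helper names (`flatten_orders`, `batched`), the batch size, and the generated data are all hypothetical.

```python
from itertools import islice


def flatten_orders(customers):
    """Lazily yield one flat row per order."""
    for customer in customers:
        for order in customer["orders"]:
            yield {"customer_id": customer["id"], **order}


def batched(rows, size):
    """Yield lists of at most `size` rows from any iterable."""
    iterator = iter(rows)
    while batch := list(islice(iterator, size)):
        yield batch


# Hypothetical input stream: 4 customers with 3 orders each, generated lazily.
customers = (
    {"id": i, "orders": [{"order_id": i * 10 + j, "quantity": j + 1} for j in range(3)]}
    for i in range(1, 5)
)

# Only one batch of rows is held in memory at a time.
batch_sizes = [len(batch) for batch in batched(flatten_orders(customers), size=5)]
```

In practice each batch would be written to storage or appended to a table rather than just counted.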

Bottom Line

Data flattening is an essential process for preparing data for predictive analytics. It allows you to transform complex nested data into a flat, tabular format more suitable for machine learning algorithms. This process can significantly improve the performance and accuracy of your predictive models and streamline the overall analytics process.

However, it is not without its challenges. Dealing with large and complex nested data structures requires significant data manipulation skills and computational resources. There are also performance considerations to keep in mind, as the data flattening process can be computationally intensive.

Despite these challenges, the benefits of data flattening far outweigh the potential pitfalls. Therefore, it is critical for data analysts and data scientists to master this process. By understanding and overcoming the challenges associated with data flattening, you can maximize the success of your predictive analytics projects.

Remember, predictive analytics relies heavily on the quality of the input data. Ensuring that your data is well-structured and prepared will significantly enhance the effectiveness of your predictive models, leading to more accurate predictions and better decision-making.

Ready to leave manual data flattening behind and try an automated approach to building predictive models? Jump into Pecan now with a free trial.