In a nutshell:

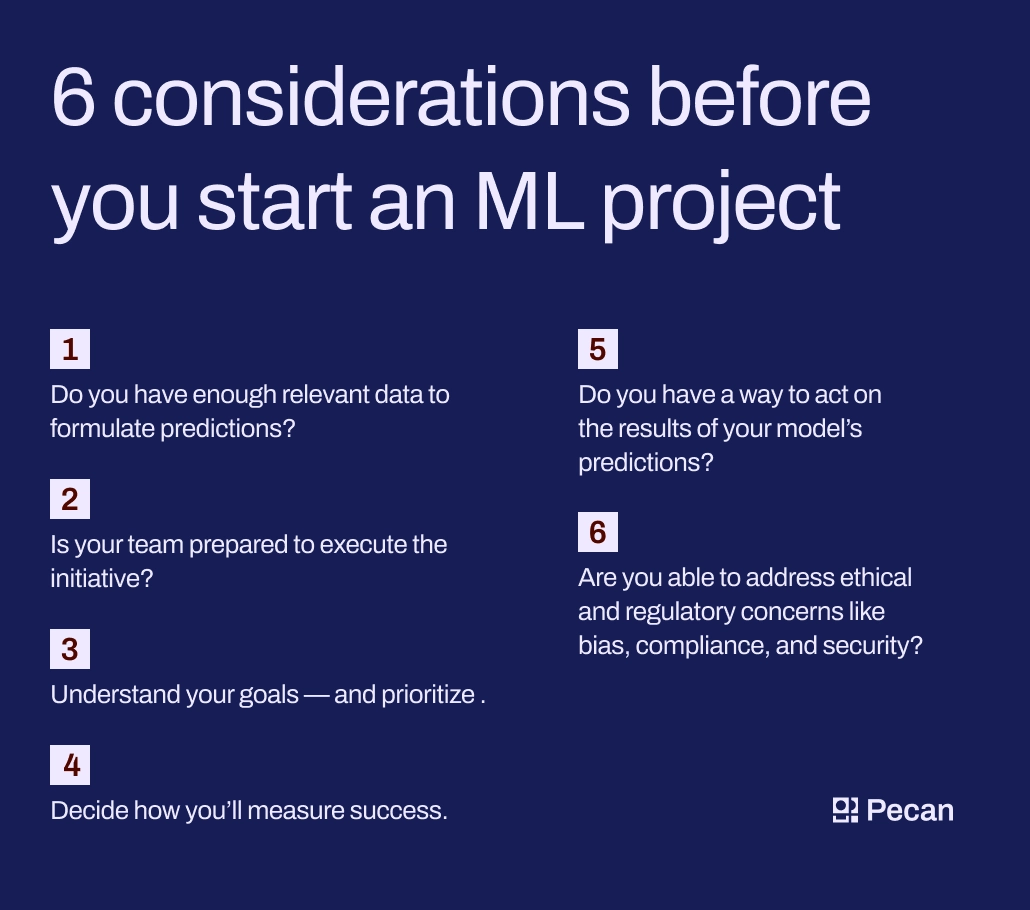

- Before starting a machine learning project, ensure you have enough relevant data and a prepared team.

- Understand your goals and prioritize them to achieve success.

- Measure success using relevant KPIs and have a plan to act on the results.

- Address ethical and regulatory concerns like bias, compliance, and security.

If you’re looking to elevate your data analytics, chances are high that you’re considering AI-powered analytics tools. But once your new predictive platform is up and running, how do you know which machine learning use cases will actually make a business impact?

Uncertainty is part of the deal when exploring data and uncovering novel solutions, but thankfully, you don’t need to feel completely in the dark. Before you kick off your next machine learning initiative, here are six questions to ask yourself to ensure your use case has merit.

Explore these considerations to make sure your machine learning project will succeed.

1. Do you have enough relevant data to formulate predictions?

One very important box to check before kicking off your initiative is that your organization has enough data to inform the model. The history of your data plays a major role in the quality of the output you can derive and, ultimately, the success of your project.

A good rule of thumb is that you need data from as far back as you’d like your model to predict forward. If you want to know what happens in two years, you’ll need data reaching at least two years back. To create a strong predictive model using a low-code platform like Pecan, we recommend providing at least a year of historical data to inform the model. The richer your data, the better.
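As a rough illustration, the rule of thumb above can be written as a quick check. This is only a sketch, not a Pecan feature: the function name `has_enough_history` and the dates are hypothetical, and it simply compares the span of your records with how far ahead you want to predict.

```python
from datetime import date


def has_enough_history(first_record: date, last_record: date, horizon_days: int) -> bool:
    """Return True if the data spans at least as many days as the forecast horizon."""
    history_days = (last_record - first_record).days
    return history_days >= horizon_days


# Hypothetical example: two years of records, checked against two different horizons.
first, last = date(2022, 1, 1), date(2024, 1, 1)
one_year_ok = has_enough_history(first, last, horizon_days=365)
three_years_ok = has_enough_history(first, last, horizon_days=3 * 365)
print(one_year_ok, three_years_ok)
```

With two years of history, the one-year horizon passes the check and the three-year horizon does not.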

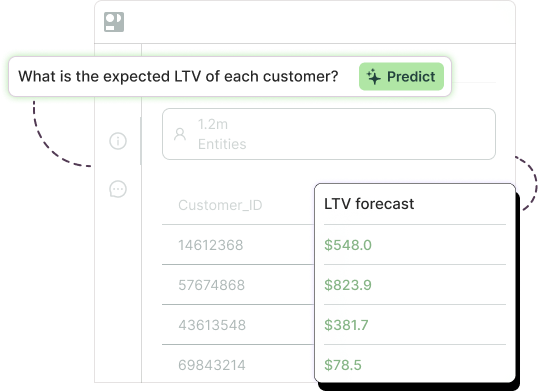

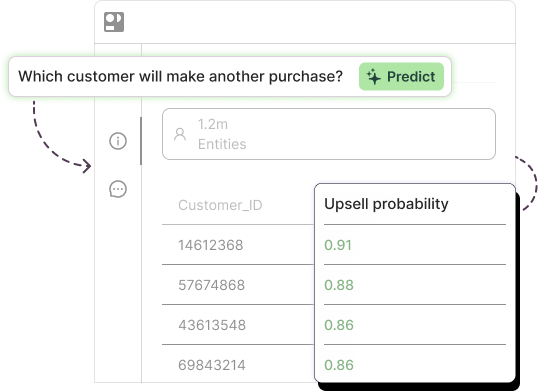

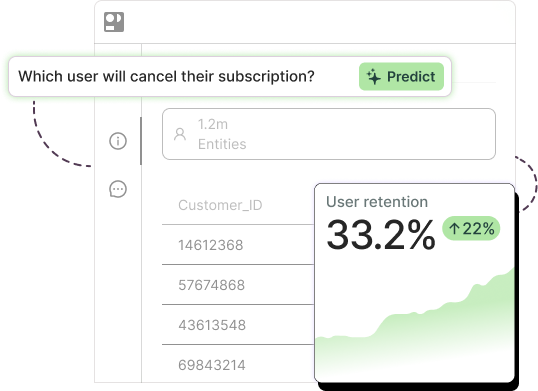

Pecan is a low-code Predictive GenAI platform that helps you streamline the entire data science process, from automating prep and blend to selecting a business problem, choosing and building features, and generating SQL-based models.

For many tools, you’ll need to ensure that your data is high quality. If you spent years poorly and haphazardly tracking variables, you won’t be able to plug that into your model. However, Pecan can manage data of any quality — and help you fully automate your data preparation process.

2. Is your team prepared to execute the initiative?

While a low-code machine learning model will help relieve many of the manual parts of your initiative, you’ll still need a team ready to develop and support the model and use case. Your team will need to be ready to provide three things to ensure a smooth initiative: time, expertise, and cultural openness.

Time

Identifying what you want your model to do, learning how to use your modeling tool, creating the prompts, and preparing and inputting data all take time. While this varies depending on what tool you’re using, you can expect to have to pencil in some time for your teams to get the use case off the ground.

If you’re using Pecan, you can expect a low level of time investment. Predictive GenAI makes the process extremely intuitive and is built to save you and your team time on model creation and tool training.

Expertise

As is the case for time investment, different tools will require different expertise levels. For example, various stages of the data science process can require in-depth industry knowledge to execute properly, and constructing a model tuned to your organization’s needs can also take significant time. And, of course, if you’re building an in-house custom tool, the required expertise is significant.

Pecan is designed to require very low levels of coding. Model creation occurs through a natural-language conversation, so even if you don’t know how to phrase your inquiry, Pecan will help you isolate a business problem and choose the right model through dialogue. Pecan also does your feature engineering for you, relieving another call for expertise.

Cultural openness

A final indicator of team preparation is cultural openness. AI is a hot-button topic, and people have diverse opinions, but for your team’s use case to thrive, team members need to be open and willing to engage with your model and its results.

Cultural openness doesn’t just extend to model development. You can’t implement the model’s conclusions without a team ready to take a new approach. Trusting the outputs of a low-code tool can feel daunting if the solution doesn’t reveal what’s happening behind the scenes. You’ll want to make sure you have processes in place to support the transition to AI within your company, including:

- Leading collaborative sessions for team members to ask questions

- Pairing individuals who are comfortable with AI with those who are more resistant

- Measuring the impact of your AI tool thoroughly over time so skeptics can see the impact in logical terms

3. Understand your goals — and prioritize

With these prerequisites checked off, it’s time to decide which use cases you’ll take on. A key foundation for achieving success is defining success, and this starts with identifying your goals. Every department that requests help with a data science initiative will have different goals. Some organizations want to lower churn rates, others may want to shrink overstock, and others will want to develop new products.

Each goal will require different time investments, so it’s vital that you and your team identify your biggest priorities. Often, these priorities will be a mix of your organization’s overarching goals and the estimated ROI of the use case. Some use cases may have immediate business value, while others may take years to pay off.

Experiment with abandon

Once you’ve selected your use cases, you’ll likely jump into designing a model. But don’t worry — while your end goal for the model may change throughout the process, Pecan makes it easy to rapidly iterate and redesign models, so you don’t have to settle for a less-than-optimal model. Test out models quickly, and if they don’t click for your use case, try out a new one.

4. Decide how you’ll measure success

Once you’ve sketched out the goals for your project, you need to identify how you plan to measure them. To do this, you may want to select a few relevant KPIs that are already measured by your organization.

If you’re looking to lower churn over the next six months, you’ll keep an eye on churn rates on at least a monthly basis. If you’re looking to reach new audiences, you may want to track demographic expansion or the conversion rates of a specific new segment.

Some goals are more qualitative. For example, if you’re looking to increase AI literacy within your own organization through your initiative, you’ll need to take an initial measure of AI literacy. This could be in the form of a survey or pre-test. Once your initiative launches, you’ll want to resurvey your team to see if there’s growth.

We recommend comparing the results of your approach after adopting predictive modeling with the results of the approach you used before, rather than comparing those results with the model’s predictions. This will demonstrate whether or not you’re moving in the right direction.
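The before-and-after comparison described above can be sketched in a few lines. The churn figures and the helper name `relative_change` are hypothetical, chosen only to show the arithmetic; substitute whichever KPI your organization already tracks.

```python
def relative_change(before: float, after: float) -> float:
    """Relative change of a KPI from the baseline period to the post-launch period."""
    if before == 0:
        raise ValueError("baseline KPI must be non-zero")
    return (after - before) / before


# Hypothetical monthly churn rates: the baseline before predictive modeling,
# and the rate after the team started acting on predictions.
baseline_churn = 0.050
post_launch_churn = 0.040
change = relative_change(baseline_churn, post_launch_churn)
print(f"Churn changed by {change:.1%} relative to the baseline")
```

A negative value here means churn fell relative to the old approach, which is the direction you want for this particular KPI.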

A note on accuracy

It’s tempting to measure your predictive model’s success based on its accuracy. How close is the model’s prediction to what happened in reality? However, accuracy isn’t always the most telling metric.

For example, consider a model that predicts fraudulent transactions. If fraudulent transactions represent 1% of all transactions and your model deems every transaction legitimate, it would be 99% accurate. However, it would never flag a single fraudulent transaction, so it isn’t helpful to the business.
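To make the fraud example concrete, here is a minimal sketch with made-up data: 1,000 transactions, of which 1% are fraudulent, scored by a "model" that labels everything legitimate. Recall (the share of actual fraud cases the model catches) is shown alongside accuracy as one example of a more telling metric; it is an illustration, not a recommendation for any specific tool.

```python
# Hypothetical data: 1,000 transactions, 1% fraudulent (label 1), the rest legitimate (label 0).
labels = [1] * 10 + [0] * 990
# A "model" that calls every transaction legitimate.
predictions = [0] * len(labels)

# Accuracy: share of transactions labeled correctly.
correct = sum(p == y for p, y in zip(predictions, labels))
accuracy = correct / len(labels)

# Recall: share of actual fraud cases the model flagged.
true_positives = sum(p == 1 and y == 1 for p, y in zip(predictions, labels))
actual_frauds = sum(labels)
recall = true_positives / actual_frauds

print(f"accuracy = {accuracy:.2%}, recall = {recall:.2%}")
```

The accuracy comes out at 99% while recall is 0%, which is exactly why accuracy alone can be misleading on imbalanced data.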

5. Do you have a way to act on the results of your model’s predictions?

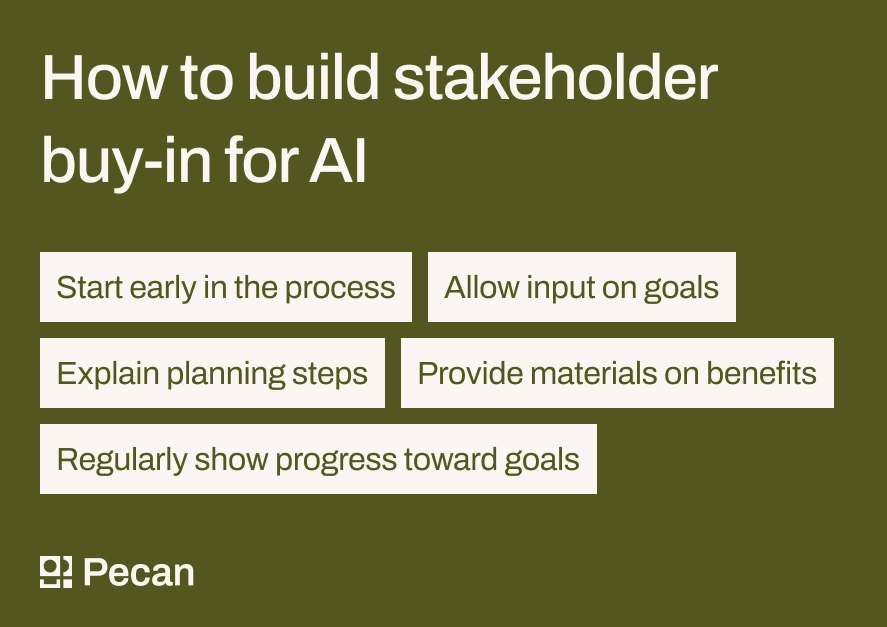

You’re not running an AI initiative just for the sake of it; you want to see your tools positively impact your organization’s processes and outcomes. That means it’s important to plan a route of action once your model has made its predictions. To do this, you’ll need to have stakeholder buy-in.

How to get stakeholder buy-in for AI projects

Stakeholder buy-in means that higher-ups and relevant parties are ready to take action and approve initiatives that are informed by your predictive model’s outputs. Great stakeholder buy-in can be fostered by:

- Addressing stakeholders early in the process

- Providing thorough materials that demonstrate the benefits of the initiative

- Allowing stakeholders to weigh in on goal-setting

- Keeping stakeholders updated throughout your initiative by citing progress toward goals

- Explaining what possible steps will be necessary so they have time to budget and plan for potential initiatives

6. Are you able to address ethical and regulatory concerns like bias, compliance, and security?

A final question to ask yourself before launching your machine learning initiative encompasses ethical and regulatory concerns. Whenever you’re using technology, you need to make sure you’re using it in a safe and protected way.

One major cornerstone of ethical AI usage is bias removal. To steer clear of bias within your model, we suggest a few different approaches, including:

- Using automated feature engineering

- Plugging comprehensive, trusted data sets into your model

- Continuously monitoring outputs for signs of bias and making adjustments as needed
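One simple way to start monitoring outputs for signs of bias is to compare how often the model makes a positive prediction for different groups. The sketch below assumes hypothetical predictions and hypothetical group labels "A" and "B"; it is one basic check among many, not a complete fairness audit and not a description of how any particular platform works.

```python
from collections import defaultdict


def positive_rate_by_group(predictions, groups):
    """Share of positive (1) predictions within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


# Hypothetical model outputs and a hypothetical segment label for each record.
predictions = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = positive_rate_by_group(predictions, groups)
gap = max(rates.values()) - min(rates.values())
print(rates, f"gap = {gap:.2f}")
```

A large gap between groups is not proof of bias on its own, but it is a signal worth investigating before acting on the model’s predictions.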

When it comes to compliance and security, we recommend using a predictive analytics tool designed to protect sensitive information. Before you select a tool, check for security and compliance certifications and read up on how it handles your customers’ data.

Pecan emphasizes data safety in many ways. First, our tool never requires PII for modeling. Pecan is also ISO 27001 and SOC Type II certified, demonstrating our commitment to security and compliance. Learn more about our security protocols here.

Ready to launch your next machine learning project?

Undertaking any AI initiative is no easy task. If you want a successful initiative, you and your team need to be aware, engaged, and ready to implement new ideas based on your models’ conclusions. Using the questions above as a checklist before you launch is a great way to make sure your initiative goes off without a hitch.

To take advantage of the most intuitive predictive analytics tool on the market, schedule a demo with our team today.