In a nutshell:

- Data analysts and scientists must manage model complexity to avoid underfitting and overfitting.

- Underfitting occurs when models are oversimplified, while overfitting happens with overly complex models.

- Mitigating underfitting involves adding complexity, while preventing overfitting requires reducing complexity.

- Balancing bias and variance is crucial in model design to avoid underfitting and overfitting.

- Best practices include feature engineering, regularization, ensemble learning, and cross-validation to manage model complexity effectively.

Overfitting and underfitting – the Goldilocks conundrum of machine learning models. Just like in the story of Goldilocks and the Three Bears, finding the perfect fit for your model is a delicate balance. Overfit, and your model becomes a hangry, overzealous learner, memorizing every nook and cranny of the training data, unable to generalize to new situations. Underfit, and your model resembles a lazy, underprepared student, failing to grasp even the most basic patterns in the data.

Understanding the concepts of underfitting (oversimplified models) and overfitting (overly complex models) is crucial in building robust and generalized predictive models that perform well on unseen data.

In this blog post, we’ll explore the intricate dance between overfitting and underfitting, arming you with the knowledge to tame your models and find that sweet spot – the “just right” fit that will have your model performing like a well-trained, highly adaptable prodigy.

We’ll help you strike the right balance to build predictive models and avoid common pitfalls. These key strategies for mastering model complexity will help enhance the performance of your predictive analytics models.

Underfitting: Recognizing and Addressing Oversimplified Models

Often, in the quest to avoid overfitting issues, it’s possible to fall into the opposite trap of underfitting. Underfitting, in simplest terms, occurs when the model fails to capture the underlying pattern of the data. It is also referred to as an oversimplified model, as it does not have the required complexity or flexibility to adapt to the data’s nuances.

Underfitting typically occurs when the model is too simple or when there are too few features (variables used by the model to make predictions) to represent the data accurately. It can also result from a poorly specified model that does not properly represent the relationships in the data.

In practical terms, underfitting is like trying to predict the weather based solely on the season. Sure, you might have a rough idea of what to expect, but the reality is far more complex and dynamic. You’re likely to miss cold snaps in spring or unseasonably warm days in winter. In this analogy, the season represents a simplistic model that doesn’t take into account more detailed and influential factors like air pressure, humidity, and wind direction.

Similarly, underfitting in a predictive model can lead to an oversimplified understanding of the data. It’s like trying to paint a detailed landscape with a broad brush – the finer elements are missed, and while the overall picture might roughly represent reality, it lacks the nuance and detail needed for accurate prediction.

For instance, in healthcare analytics, an underfit model might overlook subtle symptoms or complex interactions between various health factors, leading to inaccurate predictions about patient outcomes. In a business scenario, underfitting could produce a model that overlooks key market trends or customer behaviors, resulting in missed opportunities and inaccurate forecasts.

The Impact of Underfitting on Model Performance

Underfitting significantly undermines a model’s predictive capabilities. Since the model fails to capture the underlying pattern in the data, it does not perform well, even on the training data. Its predictions are systematically off the mark, which is the signature of high bias. The real danger of underfitting lies in its impact on generalization: a model that cannot fit the data it was trained on cannot make reliable predictions on new, unseen data either.

When underfitting occurs, the model fails to establish key relationships and patterns in the data, making it unable to adapt to or correctly interpret new, unseen data. This results in consistently inaccurate predictions.

Underfitting can lead to the development of models that are too generic to be useful. They are not equipped to handle the complexity of the data they encounter, which undermines the reliability of their predictions. Consequently, the model’s performance metrics, such as precision, recall, and F1 score, can be drastically reduced.

To make matters worse, an underfit model yields few actionable insights, which are critical to decision-making in fields ranging from business to healthcare and engineering. Hence, the consequences of underfitting extend beyond mere numbers, affecting the overall effectiveness of data-driven strategies.

Mitigating Underfitting Through Feature Engineering and Selection

Addressing underfitting often involves introducing more complexity into your model. This could mean using a more complex algorithm, incorporating more features, or employing feature engineering techniques to capture the complexities of the data.

One common technique is expanding your feature set through polynomial features, which means creating new features from powers and products of existing ones. Increasing model complexity can also involve adjusting the model’s hyperparameters, for example by allowing deeper decision trees or weakening regularization.
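To make the idea of polynomial features concrete, here is a minimal sketch using only numpy. The synthetic data, the quadratic ground truth, and the helper name `training_mse` are assumptions chosen for illustration, not part of any particular workflow. It fits a straight line and then a model with an added squared feature to the same curved data.

```python
import numpy as np

# Synthetic data (assumed for illustration): a curved relationship plus noise.
rng = np.random.default_rng(0)
x = rng.uniform(-3, 3, size=200)
y = x**2 + rng.normal(scale=0.5, size=200)

def training_mse(degree):
    """Fit a polynomial of the given degree by least squares; return training MSE."""
    # Columns are x^0, x^1, ..., x^degree: the "polynomial features".
    X = np.vander(x, degree + 1, increasing=True)
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return float(np.mean((X @ coef - y) ** 2))

mse_linear = training_mse(1)     # straight line: too simple for curved data
mse_quadratic = training_mse(2)  # adds the x^2 feature

print(f"linear fit training MSE:    {mse_linear:.3f}")
print(f"quadratic fit training MSE: {mse_quadratic:.3f}")
```

The straight line leaves a large error even on the data it was trained on, which is the telltale sign of underfitting. Adding the squared feature lets the same least-squares method capture the curve.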

Ultimately, the key to mitigating underfitting lies in understanding your data well enough to represent it accurately. This requires keen data analytics skills and a good measure of trial and error as you balance model complexity against the risks of overfitting. The correct balance will allow your model to make accurate predictions without becoming overly sensitive to random noise in the data.

Overfitting: Managing Overly Complex Models

At the other end of the spectrum from underfitting is overfitting, another common pitfall in managing model complexity. Overfitting happens when a model is excessively complex or overly tuned to the training data. Such models have learned the training data so well, including its noise and outliers, that they fail to generalize to new, unseen data.

An overfit model is overoptimized for the training data and consequently struggles to predict new data accurately. Overfitting often arises from overtraining a model, using too many features, or creating too complex a model. It can also result from failing to apply adequate regularization during training; regularization discourages the model from learning unnecessary details and noise.

The Impact of Overfitting on Model Performance

Overfitting significantly reduces the model’s ability to generalize and predict new data accurately, leading to high variance. While an overfit model may deliver exceptional results on the training data, it usually performs poorly on test data or unseen data because it has learned the noise and outliers from the training data. This impacts the overall utility of the model, as its primary goal is to make accurate predictions on new, unseen data.

The initial signs of overfitting may not be immediately evident. The model shows excellent performance on the training data, which might lead one to believe it is performing exceedingly well, but its predictive accuracy suffers once it is applied to new, unseen data.

Due to its high sensitivity to the training data (including its noise and irregularities), an overfit model struggles to make accurate predictions on new datasets. This is often characterized by a wide discrepancy between the model’s performance on training data and test data, with impressive results on the former but poor results on the latter. Simply put, the model has essentially ‘memorized’ the training data, but failed to ‘learn’ from it in a way that would allow it to generalize and adapt to new data successfully.
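The gap between training and test performance can be seen in a small experiment. The sketch below is illustrative only: the sine-shaped ground truth, the noise level, the sample sizes, and the degree-12 polynomial are all assumptions picked to provoke overfitting.

```python
import numpy as np

rng = np.random.default_rng(0)

def make_data(n):
    """Draw n noisy samples from an assumed ground truth, sin(3x)."""
    x = rng.uniform(-1, 1, size=n)
    y = np.sin(3 * x) + rng.normal(scale=0.3, size=n)
    return x, y

x_train, y_train = make_data(20)   # small training set
x_test, y_test = make_data(200)    # fresh data the model never saw

# A degree-12 polynomial has 13 coefficients: very flexible for 20 points.
degree = 12
X_train = np.vander(x_train, degree + 1, increasing=True)
X_test = np.vander(x_test, degree + 1, increasing=True)
coef, *_ = np.linalg.lstsq(X_train, y_train, rcond=None)

train_mse = float(np.mean((X_train @ coef - y_train) ** 2))
test_mse = float(np.mean((X_test @ coef - y_test) ** 2))

print(f"training MSE: {train_mse:.3f}")
print(f"test MSE:     {test_mse:.3f}")
```

A training error far below the test error is the "memorized but did not learn" pattern described above.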

Preventing Overfitting Using Dimensionality Reduction, Regularization Methods, and Ensemble Learning

There are several techniques to mitigate overfitting. Dimensionality reduction, such as Principal Component Analysis (PCA), can help to pare down the number of features, thus reducing complexity. Regularization methods, like ridge regression and lasso regression, add a penalty term to the model’s cost function that discourages large coefficients and, with them, overly complex models.
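As a rough sketch of how a penalty term works, the code below implements ridge regression in closed form with numpy. The data, the `ridge_fit` helper, and the penalty strength `alpha=10.0` are assumptions for illustration, and the sketch omits an intercept for brevity.

```python
import numpy as np

# Synthetic data (assumed): 20 features, but only the first 3 actually matter.
rng = np.random.default_rng(1)
n_samples, n_features = 30, 20
X = rng.normal(size=(n_samples, n_features))
true_w = np.zeros(n_features)
true_w[:3] = [2.0, -1.0, 0.5]
y = X @ true_w + rng.normal(scale=0.5, size=n_samples)

def ridge_fit(X, y, alpha):
    """Minimize ||y - Xw||^2 + alpha * ||w||^2 (no intercept, for simplicity)."""
    identity = np.eye(X.shape[1])
    return np.linalg.solve(X.T @ X + alpha * identity, X.T @ y)

w_unregularized = ridge_fit(X, y, alpha=0.0)   # ordinary least squares
w_ridge = ridge_fit(X, y, alpha=10.0)          # penalized fit

print(f"coefficient norm without penalty: {np.linalg.norm(w_unregularized):.3f}")
print(f"coefficient norm with penalty:    {np.linalg.norm(w_ridge):.3f}")
```

The penalty shrinks the coefficients toward zero, so the model has less freedom to chase noise in the 17 irrelevant features. Lasso uses an absolute-value penalty instead and has no closed form, so it is not shown here.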

Ensemble learning methods, like stacking, bagging, and boosting, combine multiple models to improve generalization performance. For example, a random forest averages many decision trees trained on bootstrap samples of the data, which reduces variance with little increase in bias and so helps limit overfitting.
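The averaging idea behind bagging can be sketched without any tree library. The example below is a simplified stand-in, not a random forest: it bags flexible polynomial fits instead of decision trees, and the data, the degree, and the ensemble size of 50 are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(2)

def true_fn(x):
    return np.sin(3 * x)  # assumed ground truth

x_train = rng.uniform(-1, 1, size=40)
y_train = true_fn(x_train) + rng.normal(scale=0.3, size=40)
x_test = np.linspace(-1, 1, 200)
y_test = true_fn(x_test)

def fit_predict(x_fit, y_fit, x_new, degree=8):
    """Fit a flexible polynomial by least squares and predict at x_new."""
    X_fit = np.vander(x_fit, degree + 1, increasing=True)
    coef, *_ = np.linalg.lstsq(X_fit, y_fit, rcond=None)
    return np.vander(x_new, degree + 1, increasing=True) @ coef

# Bagging: train each member on a bootstrap resample (drawn with replacement).
member_preds = []
for _ in range(50):
    idx = rng.integers(0, len(x_train), size=len(x_train))
    member_preds.append(fit_predict(x_train[idx], y_train[idx], x_test))
member_preds = np.array(member_preds)

# Compare the averaged prediction with the typical individual member.
member_mse = np.mean((member_preds - y_test) ** 2, axis=1)
bagged_mse = float(np.mean((member_preds.mean(axis=0) - y_test) ** 2))

print(f"average MSE of individual members: {member_mse.mean():.3f}")
print(f"MSE of the averaged ensemble:      {bagged_mse:.3f}")
```

Under squared error, the averaged prediction is never worse than the average member, because the individual models' errors partly cancel out.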

The key to avoiding overfitting lies in striking the right balance between model complexity and generalization capability. It is crucial to tune models prudently and not lose sight of the model’s ultimate goal—to make accurate predictions on unseen data. Striking the right balance can result in a robust predictive model capable of delivering accurate predictive analytics.

Striking the Right Balance: Building Robust Predictive Models

The ultimate goal when building predictive models is not to attain perfect performance on the training data but to create a model that can generalize well to unseen data. Striking the right balance between underfitting and overfitting is crucial because either pitfall can significantly undermine your model’s predictive performance.

Evaluating Model Performance and Generalization

Before improving your model, it is best to understand how well it is currently performing. Model evaluation involves using various scoring metrics to quantify your model’s performance. Common evaluation measures for classification models include accuracy, precision, recall, F1 score, and the area under the receiver operating characteristic curve (AUC-ROC).
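For reference, here is how the first four of these metrics are computed from a set of binary predictions. The labels below are made up for illustration. AUC-ROC is left out because it needs predicted scores, not hard labels.

```python
# Hypothetical ground-truth labels and model predictions (1 = positive class).
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]

pairs = list(zip(y_true, y_pred))
tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives

accuracy = (tp + tn) / len(y_true)   # share of all predictions that are right
precision = tp / (tp + fp)           # share of predicted positives that are right
recall = tp / (tp + fn)              # share of actual positives that were found
f1 = 2 * precision * recall / (precision + recall)  # harmonic mean of the two

print(accuracy, precision, recall, f1)  # prints 0.8 0.75 0.75 0.75
```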

The model’s ability to generalize, however, is of greater importance. This can be estimated by splitting the data into a training set and a hold-out validation set. The model is trained on the training set and evaluated on the validation set. A model that generalizes well should have similar performance on both sets.

Balancing Bias and Variance in Model Design

One way to conceptualize the trade-off between underfitting and overfitting is through the lens of bias and variance. Bias refers to the error introduced by approximating real-world complexity with a simplified model—the tendency to learn the wrong thing consistently. Variance, on the other hand, refers to the error introduced by the model’s sensitivity to fluctuations in the training set—the tendency to learn random noise in the training data.

A model with high bias is prone to underfitting as it oversimplifies the data, while a model with high variance is prone to overfitting as it is overly sensitive to the training data. The aim is to find a balance between bias and variance such that the total error is minimized, which leads to a robust predictive model.
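For squared-error loss, the "total error" mentioned here has a standard decomposition. The notation is an assumption introduced for illustration: $y = f(x) + \varepsilon$ is the observed target with noise variance $\sigma^2$, $\hat{f}(x)$ is the model's prediction, and the expectation is taken over training sets and noise.

```latex
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
  = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
  + \underbrace{\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]}_{\text{variance}}
  + \underbrace{\sigma^2}_{\text{irreducible noise}}
```

Making a model more flexible typically lowers the first term and raises the second. The third term is a floor that no model can go below.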

A useful visualization of this concept is the bias-variance tradeoff graph. On one extreme, a high-bias, low-variance model might result in underfitting, as it consistently misses essential trends in the data and gives oversimplified predictions. On the other hand, a low-bias, high-variance model might overfit the data, capturing the noise along with the underlying pattern.

This extreme sensitivity to the training data often hurts the model’s performance on new, unseen data. As such, selecting the level of model complexity should be done thoughtfully. You could start with a simpler model and gradually increase its complexity while monitoring its performance on a separate validation set.
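The "start simple and increase complexity" approach might look like the following sketch. Polynomial degree stands in for model complexity here, and the data, the 50/30 split, and the range of degrees are all assumptions for illustration.

```python
import numpy as np

# Synthetic data (assumed), split into training and validation portions.
rng = np.random.default_rng(3)
x = rng.uniform(-1, 1, size=80)
y = np.sin(3 * x) + rng.normal(scale=0.2, size=80)
x_train, y_train = x[:50], y[:50]
x_val, y_val = x[50:], y[50:]

def fit_and_score(degree):
    """Fit on the training split; return (training MSE, validation MSE)."""
    X_train = np.vander(x_train, degree + 1, increasing=True)
    X_val = np.vander(x_val, degree + 1, increasing=True)
    coef, *_ = np.linalg.lstsq(X_train, y_train, rcond=None)
    train_mse = float(np.mean((X_train @ coef - y_train) ** 2))
    val_mse = float(np.mean((X_val @ coef - y_val) ** 2))
    return train_mse, val_mse

# Increase complexity step by step and record both errors.
degrees = list(range(1, 11))
scores = {d: fit_and_score(d) for d in degrees}

# Pick the complexity with the lowest validation error, not training error.
best_degree = min(degrees, key=lambda d: scores[d][1])

for d in degrees:
    print(f"degree {d:2d}: train MSE {scores[d][0]:.3f}, val MSE {scores[d][1]:.3f}")
print("selected degree:", best_degree)
```

Training error can only go down as the degree rises, so it is no guide to where to stop. The validation error is the number to watch.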

Note that bias and variance are not the only factors influencing model performance. Other considerations, such as data quality, feature engineering, and the chosen algorithm, also play significant roles. Still, understanding the bias-variance tradeoff provides a solid foundation for managing model complexity effectively.

Best Practices for Managing Model Complexity

Managing model complexity often involves iterative refinement and requires a keen understanding of your data and the problem at hand. It includes choosing the right algorithm that suits the complexity of your data, experimenting with different model parameters, and using appropriate validation techniques to estimate model performance.

Feature engineering and selection can also improve model performance by creating meaningful variables and discarding unimportant ones. Regularization methods and ensemble learning techniques can be employed to add or reduce complexity as needed, leading to a more robust model.

Finally, cross-validation can be used to tune parameters and assess the resulting model performance across different subsets of the data. This allows you to evaluate how well your model generalizes and helps prevent underfitting and overfitting.
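Here is a minimal sketch of using k-fold cross-validation to tune one parameter, written with numpy only. The parameter being tuned is the penalty strength of a ridge regression. The data, the helper names `ridge_fit` and `cross_val_mse`, the five folds, and the candidate values are assumptions for illustration.

```python
import numpy as np

# Synthetic data (assumed): 25 features, only the first 3 are informative.
rng = np.random.default_rng(4)
n_samples, n_features = 60, 25
X = rng.normal(size=(n_samples, n_features))
true_w = np.zeros(n_features)
true_w[:3] = [2.0, -1.0, 0.5]
y = X @ true_w + rng.normal(scale=1.0, size=n_samples)

def ridge_fit(X, y, alpha):
    """Closed-form ridge regression without an intercept."""
    return np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ y)

def cross_val_mse(X, y, alpha, n_folds=5):
    """Average validation MSE over n_folds train/validation splits."""
    # Rows are already in random order here; shuffle first on real data.
    folds = np.array_split(np.arange(len(y)), n_folds)
    fold_errors = []
    for fold in folds:
        train_mask = np.ones(len(y), dtype=bool)
        train_mask[fold] = False  # hold this fold out for validation
        w = ridge_fit(X[train_mask], y[train_mask], alpha)
        fold_errors.append(np.mean((X[fold] @ w - y[fold]) ** 2))
    return float(np.mean(fold_errors))

# Score each candidate penalty strength and keep the best one.
alphas = [0.01, 0.1, 1.0, 10.0, 100.0]
cv_mse = {alpha: cross_val_mse(X, y, alpha) for alpha in alphas}
best_alpha = min(cv_mse, key=cv_mse.get)

for alpha in alphas:
    print(f"alpha {alpha:>6}: cross-validated MSE {cv_mse[alpha]:.3f}")
print("selected alpha:", best_alpha)
```

Each data point is used for validation exactly once, so the score depends less on one lucky or unlucky split than a single hold-out set does.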

By understanding, identifying, and addressing issues of underfitting and overfitting, you can effectively manage model complexity and build predictive models that perform well on unseen data. Remember, the goal is not to create a perfect model but a useful one.

Bottom Line

Mastering model complexity is an integral part of building robust predictive models. Applying these techniques will help you build models that perform well on unseen data while avoiding the pitfalls of underfitting and overfitting. As a data analyst or data scientist, your invaluable skills and efforts in managing model complexity will drive the success of predictive analytics endeavors. So, keep learning, experimenting, and striving for better, more accurate models.

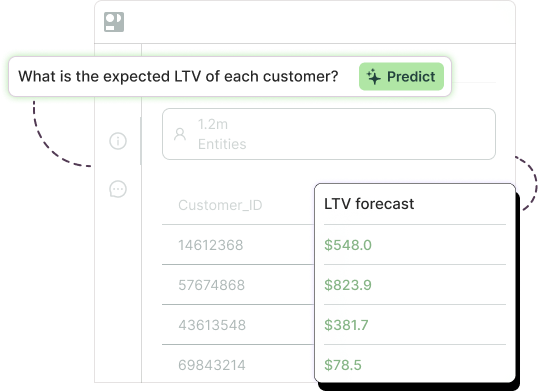

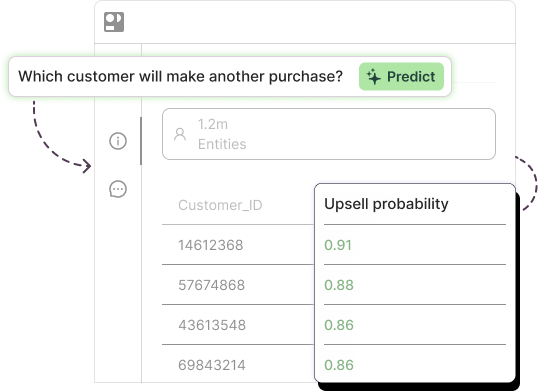

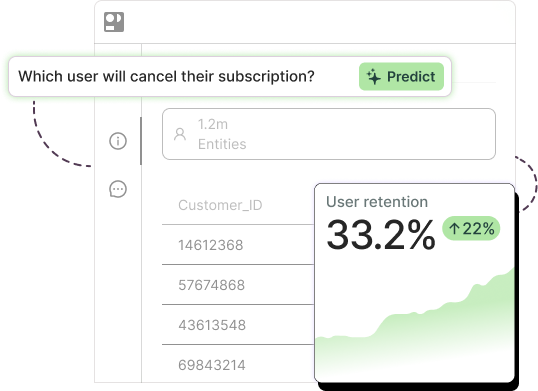

Ready to start building your “just right” predictive model now? Get a tour of Pecan today.